Introduction

This project started from my desire to create a small program for a surface computer (a Windows 8 or Android tablet) that can recognize what my 5-year-old daughter draws on it and help her learn numbers and the letters of the alphabet. I know this is hard work involving machine learning and pattern recognition. The program may not be completed until my daughter finishes secondary school, but it is a good reason for me to spend my free time on it. So far, the project has achieved several good results: a library for manipulating the UNIPEN database, a library for creating a neural network dynamically at runtime, and some classes for character segmentation. These achievements have encouraged me to continue developing the project and to share it with the community, so that beginners can more easily study pattern recognition techniques in general and online handwriting recognition techniques in particular.

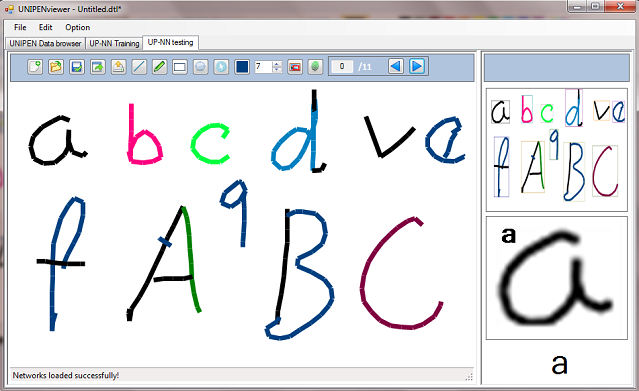

The demo can recognize not only digits but also letters drawn on a mouse drawing control, using multiple neural networks at the same time.


Picture 1a: Isolated character segmentation


Picture 1b: Convolution network for capital letter and digit recognition

Background

This library is divided into three parts:

Part 1: UNIPEN, the online handwriting training database library. It has several classes for manipulating the UNIPEN database, one of the most popular handwriting databases in the world.

Part 2: Convolution neural network library. The library is organized around the objects of a neural network: network, layer, neuron, weight, connection, activation function, forward propagation, and back propagation classes. It lets a beginner create not only a traditional neural network but also a convolution network with little effort. In particular, the library supports creating a network at runtime, so we can create or swap networks while the program is running.

Part 3: Image segmentation library. It contains functions for image pre-processing and segmentation, and is still under development.

Picture 2: Character segmentation

These techniques were introduced in the previous articles "UPV – UNIPEN online handwriting recognition database viewer control" and "Neural Network for Recognition of Handwritten Digits in C#". This article synthesizes them to give a more general view of a handwriting recognition system. I will highlight the method used to feed UNIPEN data to the input of a recognizer, and describe a convolution network for capital letter and digit recognition to show how to use the library.

The UNIPEN and its format

Picture 3: UNIPEN data browser with a function for capital letter and digit recognition

In a large collaborative effort, a wide number of research institutes and industry have generated the UNIPEN standard and database. Originally hosted by NIST, the data was divided into two distributions, dubbed the trainset and devset. Since 1999, the International UNIPEN Foundation (iUF) hosts the data, with the goal to safeguard the distribution of the trainset and to promote the use of online handwriting in research and applications. In the last years, dozens of researchers have used the trainset and described experimental performance results. Many researchers have reported well established research with proper recognition rates, but all applied some particular configuration of the data. In most cases the data were decomposed, using some specific procedure, into three subsets for training, testing and validation. Therefore, although the same source of data was used, recognition results cannot really be compared as different decomposition techniques were employed.

For some time now, it has been the goal of the iUF to organize a benchmark on the remaining data set, the devset. Although the devset is available to some of the original contributors to UNIPEN, it has not yet been officially released to a broad audience. I have not had the chance to work with it.

Because the UNIPEN trainset is a collection of datasets from different research institutes, each dataset is usually decomposed using its own specific procedure. My approach is a little different: I tried to find common points in the structure of these datasets and build a single procedure that decomposes all datasets in the trainset correctly in most cases.

The trainset is organized as follows:

| cat | nsegm | nfiles | description |
|-----|-------|--------|-------------|
| 1a | 15953 | 634 | isolated digits |
| 1b | 28069 | 1423 | isolated upper case |
| 1c | 61351 | 2145 | isolated lower case |
| 1d | 17286 | 1222 | isolated symbols (punctuations etc.) |
| 2 | 122628 | 2735 | isolated characters, mixed case |
| 3 | 67352 | 1949 | isolated characters in the context of words or texts |
| 4 | 0 | 0 | isolated printed words, not mixed with digits and symbols |
| 5 | 0 | 0 | isolated printed words, full character set |
| 6 | 75529 | 3298 | isolated cursive or mixed-style words (without digits and symbols) |
| 7 | 85213 | 3393 | isolated words, any style, full character set |
| 8 | 14544 | 4563 | text: (minimally two words of) free text, full character set |

The UNIPEN format has a detailed published description; in short, a file is thought of as a sequence of pen coordinates, annotated with various information, including segmentation and labeling. The pen trajectory is encoded as a sequence of components, .PEN_DOWN and .PEN_UP, containing pen coordinates (e.g. X Y or X Y T, as declared in .COORD). The instruction .DT specifies the elapsed time between two components. The database is divided into one or several data sets, each starting with .START_SET. Within a set, components are implicitly numbered, starting from zero. Segmentation and labeling are provided by the .SEGMENT instruction, which uses component numbers to delineate sentences, words, and characters. A segmentation hierarchy (e.g. SENTENCE WORD CHARACTER) is declared with .HIERARCHY. Because components are referred to by a unique combination of set name and order number in that set, it is possible to separate the .SEGMENT instructions from the data itself.
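To make the component numbering concrete, here is a minimal sketch of reading .PEN_DOWN and .PEN_UP components from UNIPEN text. It is not the code of the library described in this article: the class `UnipenSketch` and its members are hypothetical names, and the sketch assumes a single data set whose coordinates are declared as `.COORD X Y`, so that the first two fields of each data line are X and Y.

```csharp
using System;
using System.Collections.Generic;
using System.Globalization;
using System.IO;

// Hypothetical helper for illustration; not part of the article's library.
public static class UnipenSketch
{
    // One pen component: whether the pen was down, plus its (x, y) points.
    public sealed class Component
    {
        public bool PenDown;
        public List<(int X, int Y)> Points = new List<(int X, int Y)>();
    }

    // Reads components in file order. The index of a component in the returned
    // list is the implicit component number that .SEGMENT refers to.
    public static List<Component> ReadComponents(TextReader reader)
    {
        var components = new List<Component>();
        Component current = null;
        string line;
        while ((line = reader.ReadLine()) != null)
        {
            line = line.Trim();
            if (line.Length == 0) continue;

            if (line.StartsWith("."))
            {
                // Any keyword ends the component that was being read.
                string keyword = line.Split(' ', '\t')[0];
                if (keyword == ".PEN_DOWN" || keyword == ".PEN_UP")
                {
                    current = new Component { PenDown = keyword == ".PEN_DOWN" };
                    components.Add(current);
                }
                else
                {
                    current = null;
                }
                continue;
            }

            if (current == null) continue; // data lines of other keywords

            // Assumption: ".COORD X Y", so fields 0 and 1 are X and Y.
            string[] parts = line.Split(new[] { ' ', '\t' }, StringSplitOptions.RemoveEmptyEntries);
            if (parts.Length >= 2 &&
                int.TryParse(parts[0], NumberStyles.Integer, CultureInfo.InvariantCulture, out int x) &&
                int.TryParse(parts[1], NumberStyles.Integer, CultureInfo.InvariantCulture, out int y))
            {
                current.Points.Add((x, y));
            }
        }
        return components;
    }
}
```

Calling `UnipenSketch.ReadComponents(new StringReader(text))` returns the components in order, so a .SEGMENT entry that names component 0 maps to the first element of the list.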


In general, a UNIPEN data file consists of keywords, which fall into several groups: mandatory declarations, data documentation, alphabet, lexicon, data layout, unit system, pen trajectory, and data annotations. I built a collection of classes based on these groups to extract and categorize all the necessary information from a data file.

Although the UNIPEN format is keyword-based, the keywords do not appear in a fixed order. I therefore created a DataSet class that works like a set of storage racks: when a keyword is found, it is categorized and put on the corresponding rack. A UNIPEN file may contain one or several data sets, but in most cases there is exactly one data set per file. My library currently handles this case only.
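The storage-rack idea can be sketched as a dictionary of lists keyed by keyword group. This is only an illustration, not the actual DataSet class: `KeywordRacks` and its members are hypothetical names, and the keyword-to-group table lists just a few keywords with a grouping that is my own assumption.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical sketch of the "storage rack" idea; the real DataSet class may differ.
public sealed class KeywordRacks
{
    // Illustrative keyword-to-group table (an assumption, deliberately incomplete).
    private static readonly Dictionary<string, string> GroupOf = new Dictionary<string, string>
    {
        { ".COORD", "Mandatory declarations" },
        { ".HIERARCHY", "Mandatory declarations" },
        { ".START_SET", "Data layout" },
        { ".PEN_DOWN", "Pen trajectory" },
        { ".PEN_UP", "Pen trajectory" },
        { ".DT", "Pen trajectory" },
        { ".SEGMENT", "Data annotations" },
    };

    private readonly Dictionary<string, List<string>> racks = new Dictionary<string, List<string>>();

    // Keywords can arrive in any order, so each line is routed by its keyword.
    public void Put(string line)
    {
        string trimmed = line.Trim();
        string keyword = trimmed.Split(' ', '\t')[0];
        string group = GroupOf.TryGetValue(keyword, out string g) ? g : "Uncategorized";
        if (!racks.TryGetValue(group, out List<string> rack))
        {
            rack = new List<string>();
            racks[group] = rack;
        }
        rack.Add(trimmed);
    }

    // Returns everything stored on one rack, or an empty list if the rack is unused.
    public IReadOnlyList<string> Rack(string group)
    {
        return racks.TryGetValue(group, out List<string> rack) ? rack : new List<string>();
    }
}
```

Because every line is routed by its keyword alone, the order in which a file lists its keywords does not matter.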

Getting training patterns (pen trajectory bitmaps) from the trainset with the library is simple:

```csharp
private void btnOpen_Click(object sender, EventArgs e)
{
    if (dataProvider.IsDataStop == true)
    {
        // No loading in progress: ask for a folder and start loading.
        try
        {
            FolderBrowserDialog fbd = new FolderBrowserDialog();
            DialogResult result = fbd.ShowDialog();
            if (result == DialogResult.OK)
            {
                bool fn = false;
                string folderName = fbd.SelectedPath;
                Task[] tasks = new Task[2];
                isCancel = false;
                // Task 0: load the patterns from the selected folder.
                tasks[0] = Task.Factory.StartNew(() =>
                {
                    dataProvider.IsDataStop = false;
                    this.Invoke(DelegateAddObject, new object[] { 0, "Getting image training data, please be patient...." });
                    dataProvider.GetPatternsFromFiles(folderName);
                    dataProvider.IsDataStop = true;
                    if (!isCancel)
                    {
                        this.Invoke(DelegateAddObject, new object[] { 1, "Congatulation! Image training data loaded succesfully!" });
                        dataProvider.Folder.Dispose();
                        isDatabaseReady = true;
                    }
                    else
                    {
                        this.Invoke(DelegateAddObject, new object[] { 98, "Sorry! Image training data loaded fail!" });
                    }
                    fn = true;
                });
                // Task 1: update the progress indicator until task 0 finishes.
                tasks[1] = Task.Factory.StartNew(() =>
                {
                    int i = 0;
                    while (!fn)
                    {
                        Thread.Sleep(100);
                        this.Invoke(DelegateAddObject, new object[] { 99, i });
                        i++;
                        if (i >= 100)
                            i = 0;
                    }
                });
            }
        }
        catch (Exception ex)
        {
            MessageBox.Show(ex.ToString());
        }
    }
    else
    {
        // Loading is in progress: offer to cancel it.
        DialogResult result = MessageBox.Show("Do you really want to cancel this process?", "Cancel loadding Images", MessageBoxButtons.YesNo);
        if (result == DialogResult.Yes)
        {
            dataProvider.IsDataStop = true;
            isCancel = true;
        }
    }
}
```
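The patterns loaded above are pen trajectories turned into bitmaps. As a rough illustration of that step, the sketch below scales a list of strokes into a square grid and marks the cells the pen passes through. It is an assumption-laden stand-in, not the library's implementation: `TrajectoryRaster.Render` is a hypothetical name, the output is a plain `byte[,]` rather than the library's `ByteImageData`, and the orientation of the Y axis in the source data is ignored.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper for illustration; not part of the article's library.
public static class TrajectoryRaster
{
    // Scales the strokes to fit a size x size grid and sets visited cells to 255.
    public static byte[,] Render(IEnumerable<IList<(int X, int Y)>> strokes, int size)
    {
        int minX = int.MaxValue, minY = int.MaxValue;
        int maxX = int.MinValue, maxY = int.MinValue;
        foreach (var stroke in strokes)
        {
            foreach (var p in stroke)
            {
                minX = Math.Min(minX, p.X); maxX = Math.Max(maxX, p.X);
                minY = Math.Min(minY, p.Y); maxY = Math.Max(maxY, p.Y);
            }
        }

        var image = new byte[size, size];
        if (minX > maxX) return image; // no points at all

        // A single scale factor for both axes preserves the aspect ratio.
        double span = Math.Max(1, Math.Max(maxX - minX, maxY - minY));
        double scale = (size - 1) / span;

        foreach (var stroke in strokes)
        {
            for (int i = 0; i < stroke.Count; i++)
            {
                var a = stroke[i];
                var b = stroke[Math.Min(i + 1, stroke.Count - 1)];
                // Sample the segment a-b densely enough to leave no gaps.
                int longest = Math.Max(Math.Abs(b.X - a.X), Math.Abs(b.Y - a.Y));
                int steps = Math.Max(1, (int)Math.Ceiling(scale * longest));
                for (int s = 0; s <= steps; s++)
                {
                    double t = (double)s / steps;
                    int col = (int)Math.Round((a.X + t * (b.X - a.X) - minX) * scale);
                    int row = (int)Math.Round((a.Y + t * (b.Y - a.Y) - minY) * scale);
                    image[row, col] = 255;
                }
            }
        }
        return image;
    }
}
```

With `size` set to 29, the result matches the 29 x 29 input pattern size used by the networks later in this article.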

The loaded patterns then serve as the training data for a neural network:

```csharp
private void btTrain_Click(object sender, EventArgs e)
{
    if (isDatabaseReady && !isTrainingRuning)
    {
        TrainingParametersForm form = new TrainingParametersForm();
        form.Parameters = nnParameters;
        DialogResult result = form.ShowDialog();
        if (result == DialogResult.OK)
        {
            nnParameters = form.Parameters;
            // Copy the loaded patterns into an array for the trainer.
            ByteImageData[] dt = new ByteImageData[dataProvider.ByteImagePatterns.Count];
            dataProvider.ByteImagePatterns.CopyTo(dt);
            nnParameters.RealPatternSize = dataProvider.PatternSize;
            if (network == null)
            {
                CreateNetwork();
                NetworkInformation();
            }
            var ntraining = new Neurons.NNTrainPatterns(network, dt, nnParameters, true, this);
            tokenSource = new CancellationTokenSource();
            token = tokenSource.Token;
            this.btTrain.Image = global::NNControl.Properties.Resources.Stop_sign;
            this.btLoad.Enabled = false;
            this.btnOpen.Enabled = false;
            // Run back propagation on a worker task so the UI stays responsive.
            maintask = Task.Factory.StartNew(() =>
            {
                if (stopwatch.IsRunning)
                {
                    stopwatch.Stop();
                }
                else
                {
                    stopwatch.Reset();
                    stopwatch.Start();
                }
                isTrainingRuning = true;
                ntraining.BackpropagationThread(token);
                if (token.IsCancellationRequested)
                {
                    String s = String.Format("BackPropagation is canceled");
                    this.Invoke(this.DelegateAddObject, new Object[] { 4, s });
                    token.ThrowIfCancellationRequested();
                }
            }, token);
        }
    }
    else
    {
        // Training is already running: request cancellation.
        tokenSource.Cancel();
    }
}
```
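The handler above relies on cooperative cancellation: the worker is handed a `CancellationToken` and is expected to check it while it runs. A minimal, self-contained sketch of that pattern follows. `CancellableWork.Start` is a hypothetical name, and the `Thread.Sleep` call merely stands in for one training epoch; how `BackpropagationThread` actually polls the token is not shown in this article.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical helper for illustration; not part of the article's library.
public static class CancellableWork
{
    // Runs up to maxEpochs iterations on a worker thread and returns how many
    // completed. The token is checked between iterations, the way a training
    // loop could check it between epochs.
    public static Task<int> Start(int maxEpochs, CancellationToken token)
    {
        return Task.Run(() =>
        {
            int done = 0;
            for (int epoch = 0; epoch < maxEpochs; epoch++)
            {
                if (token.IsCancellationRequested) break; // cooperative stop
                Thread.Sleep(10);                         // stands in for one epoch
                done++;
            }
            return done;
        });
    }
}
```

A caller creates a `CancellationTokenSource`, passes its `Token` to `Start`, and calls `Cancel()` on the source to stop the loop at the next check, which is the role `tokenSource.Cancel()` plays in the handler above.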

Convolution neural network

The theory of convolution networks has been described in my previous article and in several others on CodeProject. In this article, I will focus only on what has changed in this library compared to the previous program.

This library has been completely rewritten to fit my current requirements: it should be easy to use for beginners who do not have deep knowledge of neural networks; creating a network should be simple; network parameters should be changeable without changing code; and, above all, different networks should be exchangeable at runtime.

The CreateNetwork function in the previous program:

```csharp
private bool CreateNNNetWork(NeuralNetwork network)
{
    NNLayer pLayer;
    int ii, jj, kk;
    int icNeurons = 0;
    int icWeights = 0;
    double initWeight;
    String sLabel;
    var m_rdm = new Random();

    // Layer 0: input layer, 29 x 29 = 841 neurons, no weights.
    pLayer = new NNLayer("Layer00", null);
    network.m_Layers.Add(pLayer);
    for (ii = 0; ii < 841; ii++)
    {
        sLabel = String.Format("Layer00_Neuro{0}_Num{1}", ii, icNeurons);
        pLayer.m_Neurons.Add(new NNNeuron(sLabel));
        icNeurons++;
    }

    // Layer 1: convolutional, 6 feature maps of 13 x 13 = 1014 neurons,
    // 5 x 5 kernel plus bias per map = 156 weights.
    pLayer = new NNLayer("Layer01", pLayer);
    network.m_Layers.Add(pLayer);
    for (ii = 0; ii < 1014; ii++)
    {
        sLabel = String.Format("Layer01_Neuron{0}_Num{1}", ii, icNeurons);
        pLayer.m_Neurons.Add(new NNNeuron(sLabel));
        icNeurons++;
    }
    for (ii = 0; ii < 156; ii++)
    {
        sLabel = String.Format("Layer01_Weigh{0}_Num{1}", ii, icWeights);
        initWeight = 0.05 * (2.0 * m_rdm.NextDouble() - 1.0);
        pLayer.m_Weights.Add(new NNWeight(sLabel, initWeight));
    }
    // Offsets of a 5 x 5 kernel inside the 29-pixel-wide input image.
    int[] kernelTemplate = new int[25] {
        0, 1, 2, 3, 4,
        29, 30, 31, 32, 33,
        58, 59, 60, 61, 62,
        87, 88, 89, 90, 91,
        116, 117, 118, 119, 120 };
    int iNumWeight;
    int fm;
    for (fm = 0; fm < 6; fm++)
    {
        for (ii = 0; ii < 13; ii++)
        {
            for (jj = 0; jj < 13; jj++)
            {
                iNumWeight = fm * 26;
                NNNeuron n = pLayer.m_Neurons[jj + ii * 13 + fm * 169];
                n.AddConnection((uint)MyDefinations.ULONG_MAX, (uint)iNumWeight++); // bias
                for (kk = 0; kk < 25; kk++)
                {
                    n.AddConnection((uint)(2 * jj + 58 * ii + kernelTemplate[kk]), (uint)iNumWeight++);
                }
            }
        }
    }

    // Layer 2: convolutional, 50 feature maps of 5 x 5 = 1250 neurons, 7800 weights.
    pLayer = new NNLayer("Layer02", pLayer);
    network.m_Layers.Add(pLayer);
    for (ii = 0; ii < 1250; ii++)
    {
        sLabel = String.Format("Layer02_Neuron{0}_Num{1}", ii, icNeurons);
        pLayer.m_Neurons.Add(new NNNeuron(sLabel));
        icNeurons++;
    }
    for (ii = 0; ii < 7800; ii++)
    {
        sLabel = String.Format("Layer02_Weight{0}_Num{1}", ii, icWeights);
        initWeight = 0.05 * (2.0 * m_rdm.NextDouble() - 1.0);
        pLayer.m_Weights.Add(new NNWeight(sLabel, initWeight));
    }
    // Offsets of a 5 x 5 kernel inside a 13-pixel-wide feature map.
    int[] kernelTemplate2 = new int[25] {
        0, 1, 2, 3, 4,
        13, 14, 15, 16, 17,
        26, 27, 28, 29, 30,
        39, 40, 41, 42, 43,
        52, 53, 54, 55, 56 };
    for (fm = 0; fm < 50; fm++)
    {
        for (ii = 0; ii < 5; ii++)
        {
            for (jj = 0; jj < 5; jj++)
            {
                iNumWeight = fm * 156;
                NNNeuron n = pLayer.m_Neurons[jj + ii * 5 + fm * 25];
                n.AddConnection((uint)MyDefinations.ULONG_MAX, (uint)iNumWeight++); // bias
                for (kk = 0; kk < 25; kk++)
                {
                    // One connection into each of the 6 feature maps of layer 1.
                    n.AddConnection((uint)(2 * jj + 26 * ii + kernelTemplate2[kk]), (uint)iNumWeight++);
                    n.AddConnection((uint)(169 + 2 * jj + 26 * ii + kernelTemplate2[kk]), (uint)iNumWeight++);
                    n.AddConnection((uint)(338 + 2 * jj + 26 * ii + kernelTemplate2[kk]), (uint)iNumWeight++);
                    n.AddConnection((uint)(507 + 2 * jj + 26 * ii + kernelTemplate2[kk]), (uint)iNumWeight++);
                    n.AddConnection((uint)(676 + 2 * jj + 26 * ii + kernelTemplate2[kk]), (uint)iNumWeight++);
                    n.AddConnection((uint)(845 + 2 * jj + 26 * ii + kernelTemplate2[kk]), (uint)iNumWeight++);
                }
            }
        }
    }

    // Layer 3: fully connected, 100 neurons, 100 * (1250 + 1) = 125100 weights.
    pLayer = new NNLayer("Layer03", pLayer);
    network.m_Layers.Add(pLayer);
    for (ii = 0; ii < 100; ii++)
    {
        sLabel = String.Format("Layer03_Neuron{0}_Num{1}", ii, icNeurons);
        pLayer.m_Neurons.Add(new NNNeuron(sLabel));
        icNeurons++;
    }
    for (ii = 0; ii < 125100; ii++)
    {
        sLabel = String.Format("Layer03_Weight{0}_Num{1}", ii, icWeights);
        initWeight = 0.05 * (2.0 * m_rdm.NextDouble() - 1.0);
        pLayer.m_Weights.Add(new NNWeight(sLabel, initWeight));
    }
    iNumWeight = 0;
    for (fm = 0; fm < 100; fm++)
    {
        NNNeuron n = pLayer.m_Neurons[fm];
        n.AddConnection((uint)MyDefinations.ULONG_MAX, (uint)iNumWeight++); // bias
        for (ii = 0; ii < 1250; ii++)
        {
            n.AddConnection((uint)ii, (uint)iNumWeight++);
        }
    }

    // Layer 4: output layer, 10 neurons, 10 * (100 + 1) = 1010 weights.
    pLayer = new NNLayer("Layer04", pLayer);
    network.m_Layers.Add(pLayer);
    for (ii = 0; ii < 10; ii++)
    {
        sLabel = String.Format("Layer04_Neuron{0}_Num{1}", ii, icNeurons);
        pLayer.m_Neurons.Add(new NNNeuron(sLabel));
        icNeurons++;
    }
    for (ii = 0; ii < 1010; ii++)
    {
        sLabel = String.Format("Layer04_Weight{0}_Num{1}", ii, icWeights);
        initWeight = 0.05 * (2.0 * m_rdm.NextDouble() - 1.0);
        pLayer.m_Weights.Add(new NNWeight(sLabel, initWeight));
    }
    iNumWeight = 0;
    for (fm = 0; fm < 10; fm++)
    {
        var n = pLayer.m_Neurons[fm];
        n.AddConnection((uint)MyDefinations.ULONG_MAX, (uint)iNumWeight++); // bias
        for (ii = 0; ii < 100; ii++)
        {
            n.AddConnection((uint)ii, (uint)iNumWeight++);
        }
    }
    return true;
}
```

CreateNetwork function in current demo using this library:

```csharp
private List<Char> Letters2 = new List<Char>(36) { 'A', 'B', 'C', 'D', 'E', 'F', 'G', 'H',
    'I', 'J', 'K', 'L', 'M', 'N', 'O', 'P', 'Q', 'R', 'S', 'T', 'U', 'V', 'W', 'X', 'Y', 'Z',
    '0', '1', '2', '3', '4', '5', '6', '7', '8', '9' };

private List<Char> Letters = new List<Char>(62) { 'A', 'B', 'C', 'D', 'E', 'F', 'G', 'H',
    'I', 'J', 'K', 'L', 'M', 'N', 'O', 'P', 'Q', 'R', 'S', 'T', 'U', 'V', 'W', 'X', 'Y', 'Z',
    'a', 'b', 'c', 'd', 'e', 'f', 'g', 'h', 'i', 'j', 'k', 'l', 'm', 'n', 'o', 'p', 'q', 'r',
    's', 't', 'u', 'v', 'w', 'x', 'y', 'z', '0', '1', '2', '3', '4', '5', '6', '7', '8', '9' };

private List<Char> Letters1 = new List<Char>(10) { '0', '1', '2', '3', '4', '5', '6', '7', '8', '9' };

void CreateNetwork1()
{
    network = new ConvolutionNetwork();
    network.Layers = new NNLayer[5];
    network.LayerCount = 5;

    NNLayer layer = new NNLayer("00-Layer Input", null, new Size(29, 29), 1, 5);
    network.InputDesignedPatternSize = new Size(29, 29);
    layer.Initialize();
    network.Layers[0] = layer;

    layer = new NNLayer("01-Layer ConvolutionalSubsampling", layer, new Size(13, 13), 6, 5);
    layer.Initialize();
    network.Layers[1] = layer;

    layer = new NNLayer("02-Layer ConvolutionalSubsampling", layer, new Size(5, 5), 50, 5);
    layer.Initialize();
    network.Layers[2] = layer;

    layer = new NNLayer("03-Layer FullConnected", layer, new Size(1, 100), 1, 5);
    layer.Initialize();
    network.Layers[3] = layer;

    layer = new NNLayer("04-Layer FullConnected", layer, new Size(1, Letters1.Count), 1, 5);
    layer.Initialize();
    network.Layers[4] = layer;

    network.TagetOutputs = Letters1;
}
```

In the current version, if I want a network that recognizes not only the 10 digits but also letters (62 outputs in total), I simply add more layers and change some parameters as follows:

```csharp
void CreateNetwork()
{
    network = new ConvolutionNetwork();
    network.Layers = new NNLayer[6];
    network.LayerCount = 6;

    NNLayer layer = new NNLayer("00-Layer Input", null, new Size(29, 29), 1, 5);
    network.InputDesignedPatternSize = new Size(29, 29);
    layer.Initialize();
    network.Layers[0] = layer;

    layer = new NNLayer("01-Layer ConvolutionalSubsampling", layer, new Size(13, 13), 10, 5);
    layer.Initialize();
    network.Layers[1] = layer;

    layer = new NNLayer("02-Layer ConvolutionalSubsampling", layer, new Size(5, 5), 60, 5);
    layer.Initialize();
    network.Layers[2] = layer;

    layer = new NNLayer("03-Layer FullConnected", layer, new Size(1, 300), 1, 5);
    layer.Initialize();
    network.Layers[3] = layer;

    layer = new NNLayer("04-Layer FullConnected", layer, new Size(1, 200), 1, 5);
    layer.Initialize();
    network.Layers[4] = layer;

    layer = new NNLayer("05-Layer FullConnected", layer, new Size(1, Letters.Count), 1, 5);
    layer.Initialize();
    network.Layers[5] = layer;

    network.TagetOutputs = Letters;
}
```

We can change all network parameters, such as the number of layers, input pattern size, number of feature maps, kernel size, number of neurons in a layer, and number of outputs, to get the network that suits us best. Changing the network does not affect the forward propagation or back propagation classes.
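The hard-coded loop bounds in the previous program (841, 1014, 156, 1250, 7800, 125100, 1010) all follow from a few size parameters: feature map size, number of feature maps, and kernel size. The sketch below reproduces that arithmetic. `LayerMath` is a hypothetical helper, and the formulas are derived from the previous program's numbers (5 x 5 kernels applied with a step of 2); whether `NNLayer.Initialize` computes its sizes in exactly this way is an assumption.

```csharp
using System;

// Hypothetical helper for illustration; not part of the article's library.
public static class LayerMath
{
    // Output width of a convolution with the given kernel, step 2, no padding.
    public static int ConvOutputSize(int inputSize, int kernel)
    {
        return (inputSize - kernel) / 2 + 1;
    }

    // Neurons in a layer of `maps` feature maps, each width x height.
    public static int Neurons(int width, int height, int maps)
    {
        return width * height * maps;
    }

    // Weight slots the previous program allocates for a convolutional layer:
    // (kernel * kernel + 1 bias) for every pair of previous map and current map.
    public static int ConvWeights(int kernel, int prevMaps, int maps)
    {
        return (kernel * kernel + 1) * prevMaps * maps;
    }

    // Fully connected layer: one weight per input neuron plus a bias, per neuron.
    public static int FullWeights(int prevNeurons, int neurons)
    {
        return (prevNeurons + 1) * neurons;
    }
}
```

For example, `ConvOutputSize(29, 5)` gives 13 and `ConvOutputSize(13, 5)` gives 5, the two feature map sizes passed to the constructors above, while `ConvWeights(5, 6, 50)` gives 7800 and `FullWeights(1250, 100)` gives 125100, matching the loops in the previous program.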


Experiment with the library

The demo program presents two main functions of the library: the UNIPEN data browser, and convolution neural network training and testing. The input data is the UNIPEN trainset, which can be downloaded from http://unipen.nici.kun.nl/. For the demo program to run correctly, the trainset folder has to be renamed to UnipenData.

Picture 4: UNIPEN data browser

We can simply select the Data folder in UnipenData to browse all the data. The recognition function is activated by loading a network parameters file. Depending on the network file, the program can recognize digits only, or all capital letters plus digits.

Picture 5: Convolution network training

The default convolution network has 62 outputs. You can change the network by loading one of the attached network parameters files. To get the correct training data, for example for a 36-output network (capital letters and digits), you should delete all folders in the Data folder except 1a and 1b (the digit and capital letter folders).

In my experiments, the results are rather good: 88% accuracy on the combined set of capital letters and digits, and 97% on digits alone. I could not run the experiment on the 62-output network because my laptop nearly burned out when I trained it.

Points of Interest

Like a human brain, an artificial intelligence system cannot rely on one single neural network with billions of neurons to solve every problem. It will contain several small networks, each solving a separate problem. My library has this capacity, so I hope it can be applied not only to my daughter's program but also to a real system some day.

At the moment, this project is sponsored by my university as a small annual research project. I am looking for a donation or scholarship to continue it. I would highly appreciate it if someone interested in this project could help develop it further.

Votes and comments on my article are welcome.

History

Library version: 1.0 initial code

Version 1.01: fixed bugs (the Unipen library now reads NicIcon and UJI-Penchar files correctly), added character segmentation functions to the Unipen library, and fixed bugs in the neuron library. Network parameters from the previous version are not compatible with the current version. If you downloaded the version 1.0 demo, please download all files again.

Version 2.0, which can recognize 62 characters on a mouse drawing control (picture 1), will be posted in the coming article "Large pattern recognition system using multi neural networks".
