Introduction

When I started using the cloud to host applications and solutions, it was always with one cloud provider at a time. Some companies I worked at preferred AWS, some preferred Azure, and others used private hosts, Google, etc. My own preference is for Azure, and I generally advise managed services when going to the cloud for the first time: there is a lower learning curve for existing .NET developers, the tooling integration is great, it's very robust, and start-up costs are low.

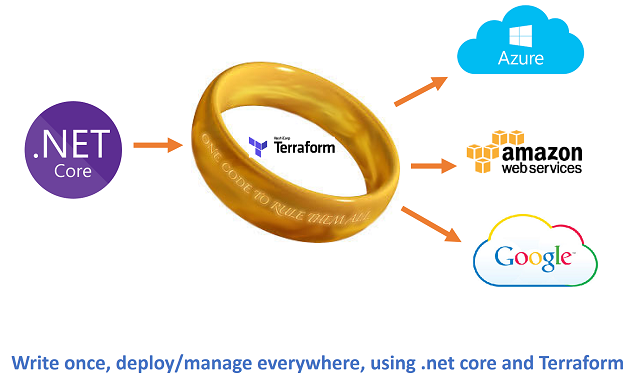

I've never been in a situation (until now) where I needed to design a solution that had to be able to run on multiple providers at the same time, with everything interoperable. It's an interesting challenge. My approach is generally to use 'platform as a service' as far as possible; however, in my current project, things have taken a different direction. We are aiming for a single code base and happen to have an experienced DevOps team available, so 'infrastructure as a service' has turned out to be an interesting option that allows me to design a 'code once, run anywhere' system. The concept is simple ... we need a 'startup template' that we can execute against *any* major cloud provider, and have this template spin up the virtual networks, clusters of machines, containers, etc. that we need, and then auto-scale on demand, all using a single code base.

This article is the first in a series where I will outline the approach and technologies used - hopefully, it's of use to someone else!

Background

Platform as a service is pretty wonderful: mostly, it does exactly what you need. The great benefit is that you just start coding, without having to worry about managing the underlying machines/networks/services that make everything hang together. Generally speaking, with a few clicks of a mouse and a bit of setup, the platform auto-scales and takes care of the nitty-gritty that can be a complete pain otherwise. Sometimes, however, things go outside the norm and you need to roll up your sleeves. I find this usually happens when the technologies being used are not (yet) available as elements of the platform as a service.

My current project involves working with large volumes of data, ingesting 1.5TB+ of new data per day, and generating upwards of 35m new database rows from this every day. There is a very experienced operations team already in place, so they are happy to manage the specialist infrastructure needed. What I want to make sure however is that we automate as much as possible, across different cloud hosting platforms, and allow those clever ops guys to deal with serious issues and not get bogged down with daily chores.

For this project, we are managing the entire infrastructure ourselves. This means we need to create, manage, and scale services up/out and down on demand. It's actually not as daunting as one would think, as there are very useful technologies out there to assist. There are three aspects to the automation: the first is management of the virtual machines (called nodes), the second is control of the containers that the various services run inside, and the third is the containers themselves.

The main technologies I am using will be Docker for containers, Kubernetes to manage the containers, and Terraform to manage the virtual machines (my thanks to the team in Microsoft Ireland for putting me onto this one!). As there will be underlying code orchestrating everything, and I want it to be truly cross-platform, I will be using .NET Core for this purpose. This initial article will talk about Terraform, where it fits in, and how it can be used on Azure. Later articles in the series will look at the other moving parts and how everything pulls together.

Plain Old Cloud...

The first thing we are going to do is create a virtual machine and all of the supporting *stuff* it needs using the Azure Portal ... we're doing this simply to see how easy it is, then we will take a step back and see what we need to do to automate it. For this, I am setting up a small Linux virtual machine and just taking the default settings all the way, nothing fancy to see here....

The thing to note is what that simple "select & click" on Azure actually does ... sure, it creates the Virtual Machine, but it also puts in place a supporting resource group, storage area, network settings, sub-nets, public IPs, etc.

Having seen how easy it is using standard tools, and how Azure automates the heavy lifting for us, let's now look at the Terraform approach and how it can assist us in replicating this ease of use but across different cloud vendors.

So Why Terraform?

For sure, you could do this machine automation thing in .NET code, PowerShell, etc., but for this use case, that's not the point. For my purposes, *in this project*, I need to deploy the same infrastructure over multiple cloud hosts (Azure, AWS, Alibaba, Google and some private clouds). One could argue a case for putting together some classes from the ground up yourself that just abstract base requirements to managed services (such as DocumentDB on Azure, DynamoDB on AWS, etc.). However, this moves away from the core project requirement of using the same base infrastructure and technologies on every cloud host that the DevOps guys can manage.

The benefit that Terraform provides is simple: it acts as middleware that orchestrates the management of virtual machines and other virtual resources on multiple cloud hosts, freeing the developer from having to keep up with different, constantly changing APIs when trying to manage a cloud infrastructure. Terraform is an open source tool (written in Go) that we drive through a command line interface (CLI) and configuration files. The configuration files are written in HCL (HashiCorp Configuration Language), a simple format that is interchangeable with JSON, and the instructions in a configuration can be specific to each host provider. Terraform translates those instructions as needed to manage virtual resources using the specific APIs of each cloud host. Terraform has basically done the heavy lifting for us already, it's open source, and it's well maintained, so I really don't need to worry about it, and I prefer not to reinvent the wheel if I don't have to.
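
To make the "specific to each host provider" point concrete, here is a rough sketch of one configuration that talks to two clouds. The aliases 'example_rg' and 'example_vpc', the resource names, and the AWS region are illustrative assumptions and not part of this project's actual config; credentials are omitted. The CLI workflow is the same for both, but the resource types and their arguments belong to each provider.

```hcl
# Azure: a resource group in a named region (credentials omitted in this sketch)
provider "azurerm" {}

resource "azurerm_resource_group" "example_rg" {
  name     = "example-resource-group"
  location = "North Europe"
}

# AWS: same tool and file format, but provider-specific resource types and arguments
provider "aws" {
  region = "eu-west-1" # assumed region, for illustration only
}

resource "aws_vpc" "example_vpc" {
  cidr_block = "10.0.0.0/16"
}
```

In other words, Terraform gives us one tool and one workflow, while the description of each cloud's resources is still written per provider.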

Getting Started

To get things moving, we are first going to set up and create a base machine remotely. This will demonstrate the basic functionality. In the next article, we will expand on this and show how we can use .NET Core in Docker to help automate and orchestrate things further.

There are two things you need to download to get started.

- The Terraform CLI - you can download this from the Terraform.io website

- A PowerShell script that kicks things off for authentication with Azure. I have attached a copy of this to the article for download (along with sample script files); you can also get it from the original GitHub repo (many thanks to Eugene Chuvyrov for scripting it up!).

The Terraform CLI is a self-contained console application that you run from the command line. It takes input parameters to determine what to do. I suggest downloading the file, creating a folder and unzipping into there. In my case, I have a general 'Data' folder in which I created a 'Terraform' folder and extracted to there. I also placed the PowerShell script that you can download at the top of this article into the same folder.

Azure Authentication

The first thing we need to do is create some authentication for ourselves in Azure. Actually, this is not for ourselves, but for a specific internal only api/app purpose known as a 'service principal'. In short, doing this ensures that you don't use your main credentials to execute commands, and if you lose control, it's only to that service principal. So it's like a very restricted account of sorts (more information on service principals here).

To kick things off, we need to log in to create these service principal credentials. For that, we will use the PowerShell script attached to this article.

Note the param 'setup' after the script. After running this command, you are asked to accept or reject data collection, and then login to your Azure account:

Once you log in, you are prompted to enter a NAME for your app, and a password. When this is complete, you will be given a response in JSON that details settings you need for the Terraform setup config.

Terraform Azure config

Having created our service principal and received the settings we need from the script, we then start to build up our configuration file that Terraform will use to create the resources. If we refer back to what was auto created for us by the Azure portal click/build earlier, we get a fair idea of what we need to put in our config:

The Terraform config file consists of a number of sections. At the top, we declare the 'Provider', which in our case is the cloud host. In here, we give the details that we received as JSON output when we ran the PowerShell setup script above...

Terraform config files have a '.tf' extension. I created my first one simply called 'sample.tf'. It's a simple text file, and the format is very basic.

Provider Details

At the top of the file, I give the details for the provider, together with the details from JSON:

# Core init
provider "azurerm" {
  client_id       = "your client id in here"
  client_secret   = "your secret in here"
  subscription_id = "your subscription value in here"
  tenant_id       = "your tenant id in here"
}

(Clearly you need to input your own values where indicated!)

Note the provider name 'azurerm'. The format for other providers is different. You can get the full details of these on the Terraform docs page on providers.
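
As an alternative to typing the secrets straight into the provider block, the following is a minimal sketch that passes them in as variables. The variable names are my own choice for illustration; nothing in the setup script dictates them.

```hcl
# Hypothetical variable names; the values are supplied from outside this file
variable "client_id" {}
variable "client_secret" {}
variable "subscription_id" {}
variable "tenant_id" {}

# Same provider as before, but with no secrets stored in the config itself
provider "azurerm" {
  client_id       = "${var.client_id}"
  client_secret   = "${var.client_secret}"
  subscription_id = "${var.subscription_id}"
  tenant_id       = "${var.tenant_id}"
}
```

The values can then live in a 'terraform.tfvars' file that stays out of source control, or be passed on the command line with '-var'. This keeps the config file itself safe to share with the rest of the team.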

From the top down, we then start building up a description of what we want Terraform to create. Terraform compares this description against what it has already created (which it tracks in a state file): resources that already match are left alone, and anything missing is created. You can also force an overwrite/rebuild of virtual resources (check the documentation for details).

Resource Group

The top level placeholder for all things Azure is the 'Resource group'.

# Setup resource group
resource "azurerm_resource_group" "Alias_RG" {
  name     = "Terraform-resource-group"
  location = "North Europe"
}

Let's talk about some of the settings. On the top line, we have a comment, denoted by '#'. Next, we have the first token, which tells Terraform what type of item follows, in this case a 'resource'. As the previous section informed Terraform that what follows is an Azure configuration, it understands that the resource relates to this, and the type declared is 'azurerm_resource_group'. Straight after this, we give the item an alias; in this case, I named it 'Alias_RG' (for resource group). The 'name' gives the resource being created a title, and the 'location' refers to the region on the cloud provider where the resources should be created. I'm pretty sure all of the cloud providers have the concept of a location or region.

Network Settings

We next have a group of settings for the network resources that the virtual machine we are building will sit in. As before, you can use pre-existing resources if you wish; I am simply creating everything from scratch here to show the detail being built out. Most of the individual values are self-explanatory.

# Create virtual network within the group
resource "azurerm_virtual_network" "Alias_VN" {
  name                = "Terraform-virtual-network"
  resource_group_name = "${azurerm_resource_group.Alias_RG.name}"
  address_space       = ["10.0.0.0/16"]
  location            = "North Europe"
}

One key item to note above is how we use a template expression, "${azurerm_resource_group.Alias_RG.name}", to reference the alias we created for the resource group.

Each alias is prefixed with the type that should be referenced:

# Create subnet
resource "azurerm_subnet" "Alias_SubNet" {
  name                 = "subn"
  resource_group_name  = "${azurerm_resource_group.Alias_RG.name}"
  virtual_network_name = "${azurerm_virtual_network.Alias_VN.name}"
  address_prefix       = "10.0.2.0/24"
}

and after the alias, the object member/property that should be referred to:

# create public IP
resource "azurerm_public_ip" "Alias_PubIP" {
  name                         = "TestPublicIP"
  location                     = "North Europe"
  resource_group_name          = "${azurerm_resource_group.Alias_RG.name}"
  public_ip_address_allocation = "dynamic"

  tags {
    environment = "TerraformDemo"
  }
}

We can also use variables in the script; they are declared once and then referenced in much the same way as an alias. This is very useful where we want to declare some top-level naming convention and have it cascade through our template.

# create network interface
resource "azurerm_network_interface" "Alias_NIC" {
  name                = "tfnetinterface"
  location            = "North Europe"
  resource_group_name = "${azurerm_resource_group.Alias_RG.name}"

  ip_configuration {
    name                          = "testconfiguration1"
    subnet_id                     = "${azurerm_subnet.Alias_SubNet.id}"
    private_ip_address_allocation = "static"
    private_ip_address            = "10.0.2.5"
    public_ip_address_id          = "${azurerm_public_ip.Alias_PubIP.id}"
  }
}
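
The variables idea mentioned earlier can be sketched roughly as follows. The variable names 'location' and 'prefix' are assumptions of mine rather than anything from the sample files; the resource group is rewritten here only to show the values cascading through.

```hcl
# Declared once at the top of the config (hypothetical names)
variable "location" {
  description = "Azure region used by every resource"
  default     = "North Europe"
}

variable "prefix" {
  description = "Naming prefix cascaded through resource names"
  default     = "Terraform"
}

# Variant of the resource group that picks up both variables
resource "azurerm_resource_group" "Alias_RG" {
  name     = "${var.prefix}-resource-group"
  location = "${var.location}"
}
```

With this in place, every hard-coded "North Europe" in the other resources could be swapped for "${var.location}", so moving the whole setup to another region becomes a one-line change.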

Storage Account Settings

After the network settings, we then set up the configuration for where both the data and the virtual machine itself will be stored.

# create storage account
resource "azurerm_storage_account" "Alias_StorageAccount" {
  name                = "tfstorage1234xx"
  resource_group_name = "${azurerm_resource_group.Alias_RG.name}"
  location            = "North Europe"
  account_type        = "Standard_LRS"

  tags {
    environment = "staging"
  }
}

We use the same alias naming convention throughout. Also, of interest is we can introduce the concept of dependencies...

# create storage container
resource "azurerm_storage_container" "Alias_Storage" {
  name                  = "vhd"
  resource_group_name   = "${azurerm_resource_group.Alias_RG.name}"
  storage_account_name  = "${azurerm_storage_account.Alias_StorageAccount.name}"
  container_access_type = "private"
  depends_on            = ["azurerm_storage_account.Alias_StorageAccount"]
}
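
A note on the dependency above: Terraform also works out ordering from the "${...}" references themselves, so the reference to the storage account name already implies that the account must exist first. As a sketch, the same container could be declared as follows instead (a variant of the block above, not an addition to it):

```hcl
# Variant of the storage container with no explicit depends_on
resource "azurerm_storage_container" "Alias_Storage" {
  name                = "vhd"
  resource_group_name = "${azurerm_resource_group.Alias_RG.name}"

  # This reference alone tells Terraform to create the storage account first
  storage_account_name  = "${azurerm_storage_account.Alias_StorageAccount.name}"
  container_access_type = "private"
}
```

An explicit 'depends_on' is mainly of use when one resource relies on another without referencing any of its properties.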

Virtual Machine Settings

Finally, we come down to setting out what we want for the virtual machine itself. As before, we link this new item to the other aliases for the resource group, network, storage account, etc. In the operating system profile, we also give the initial username/password credentials the machine should be set up with; we can use these later to test if everything worked. Note also the 'storage image reference', which says which image the machine should be built from. Here we can be quite specific in our requirements, and use our own images as well as those predefined by the cloud host.

# create virtual machine
resource "azurerm_virtual_machine" "Alias_UBuntuVM" {
  name                  = "terraformvm"
  location              = "North Europe"
  resource_group_name   = "${azurerm_resource_group.Alias_RG.name}"
  network_interface_ids = ["${azurerm_network_interface.Alias_NIC.id}"]
  vm_size               = "Standard_A0"

  storage_image_reference {
    publisher = "Canonical"
    offer     = "UbuntuServer"
    sku       = "14.04.2-LTS"
    version   = "latest"
  }

  storage_os_disk {
    name          = "myosdisk"
    vhd_uri       = "${azurerm_storage_account.Alias_StorageAccount.primary_blob_endpoint}${azurerm_storage_container.Alias_Storage.name}/somevmdisk.vhd"
    caching       = "ReadWrite"
    create_option = "FromImage"
  }

  os_profile {
    computer_name  = "hostname"
    admin_username = "testadmin"
    admin_password = "Password1234!"
  }

  os_profile_linux_config {
    disable_password_authentication = false
  }

  tags {
    environment = "staging"
  }
}
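
To make it easier to test the machine afterwards, one option is to have Terraform report the public IP address once it has finished. This is a small sketch only; the output name 'vm_public_ip' is my own. Because the address above is allocated dynamically, Azure may not have assigned it until the machine is running, so the value can be empty on the first run.

```hcl
# Hypothetical output name; reads the address from the public IP declared earlier
output "vm_public_ip" {
  value = "${azurerm_public_ip.Alias_PubIP.ip_address}"
}
```

Once the config has been applied, running terraform.exe output vm_public_ip prints the value, which can then be used with the 'testadmin' credentials from the operating system profile.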

Terraform Execution

With our configuration complete, the next thing is to have Terraform set things up and apply the config remotely for us. This is a two-step process: first we 'plan' the job, then we 'apply' it.

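At the command line, in the folder containing your config file (sample.tf, or whatever you named it), enter terraform.exe plan. Terraform reads every '.tf' file in that folder (newer Terraform versions also need a one-off terraform.exe init beforehand to download the provider plugin).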

After a second or so, Terraform will have pre-planned the job with output that should look similar to the following:

The last main step (fingers crossed!) is to 'apply' the plan to the cloud host. The 'apply' action works through the planned job, connects to the specified cloud host and uses the config plan to execute and create/modify the resources as required. When complete, the output should look something like the following:

So, did it work? ... let's take a look in the Azure portal...

Looking good! ... Ok, so that's the basics of Terraform. Further articles in this series will look at how we can automate and harness this using a .NET Core API, and then further extend orchestration using Docker and Kubernetes. As always, if you liked the article, please give it a vote at the top!

History

- 29th May, 2017 - Version 1