Introduction

Developing consistent and meaningful benchmark results for code is a complex task. Measurement tools external to applications exist (Intel® VTune™ Amplifier, SmartBear AQTime, Valgrind), but they are sometimes expensive for small teams or cumbersome to use. This project, Celero, aims to be a small library that can be added to a C++ project to benchmark code in a way that is easy to reproduce, share, and compare among individual runs, developers, or projects. Celero uses a framework similar to that of GoogleTest to make its API easier to use and integrate into a project. Make automated benchmarking as much a part of your development process as automated testing.

Background

The goal, generally, of writing benchmarks is to measure the performance of a piece of code. Benchmarks are useful for comparing multiple solutions to the same problem to select the most appropriate one. Other times, benchmarks can highlight the performance impact of design or algorithm changes and quantify them in a meaningful way.

By measuring code performance, you eliminate errors in your assumptions about what the "right" solution is for performance. Only through measurement can you confirm that using a lookup table, for example, is faster than computing a value. Such lore (which is often repeated) can lead to bad design decisions and, ultimately, slower code.

The goal in writing good benchmarking code is to eliminate all of the noise and overhead, and measure just the code under test. Sources of noise in the measurements include clock resolution noise, operating system background operations, test setup/teardown, framework overhead, and other unrelated system activity.

At a theoretical level we want to measure "t", the time to execute the code under test. In reality, we measure "t" plus all of this measurement noise.

These extraneous contributors to our measurement of "t" fluctuate over time. Therefore, we want to isolate "t" as well as we can. We do this by making many measurements and keeping only the smallest total. Because noise only ever adds time, the smallest total is the one with the smallest noise contribution and is the closest to the actual time "t".
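The "keep the smallest total" rule can be sketched as follows. This is a minimal illustration, not Celero's code; the function name BestEstimate is hypothetical, and each input value is assumed to be one measured total in microseconds.

```cpp
#include <algorithm>
#include <cstdint>
#include <vector>

// Hypothetical helper: given several measured totals (microseconds) for the
// same code, return the smallest. Noise only adds time, so the minimum is the
// best available estimate of the true cost "t".
std::uint64_t BestEstimate(const std::vector<std::uint64_t>& samplesUs)
{
    // Assumes at least one sample: min_element on an empty range returns
    // end(), which must not be dereferenced.
    return *std::min_element(samplesUs.begin(), samplesUs.end());
}
```

For example, BestEstimate({530112, 517049, 524870}) yields 517049.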

Once this measurement is obtained, it has little meaning in isolation. It is important to create a baseline test against which to compare. A baseline should generally be a "classic" or "pure" solution to the problem you are trying to solve. Once you have a baseline, you have a meaningful time to compare your algorithm against. Simply saying that your fancy sorting algorithm (fSort) sorted a million elements in 10 milliseconds is not sufficient by itself. However, compare that to a classic sorting algorithm baseline such as quick sort (qSort), and then you can say that fSort is 50% faster than qSort on a million elements. That is a meaningful and powerful measurement.

Implementation

Celero makes heavy use of C++11 features that are available in both Visual C++ 2012 and GCC 4.7, which greatly helped keep the code clean and portable. To make adopting the code easier, all definitions a user needs are in the celero namespace within a single include file: Celero.h.

Celero.h contains the macro definitions that turn each user benchmark case into its own unique class, with the associated test fixture (if any), and then register the test case with a factory. The macros automatically associate baseline test cases with their benchmarks so that, at run time, baseline-relative numbers can be computed. This association is maintained by TestVector.
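The general technique of turning a macro invocation into a uniquely named class and registering it with a factory during static initialization can be sketched as below. Everything here (MY_BENCHMARK, BenchmarkBase, Registry) is hypothetical and only illustrates the pattern; it is not what Celero's BASELINE or BENCHMARK macros actually expand to.

```cpp
#include <functional>
#include <memory>
#include <string>
#include <utility>
#include <vector>

// Hypothetical base class: each benchmark body becomes an override of Run().
struct BenchmarkBase
{
    virtual ~BenchmarkBase() = default;
    virtual void Run() = 0;
};

// Hypothetical factory: stores a name plus a function that creates a fresh
// instance of the generated benchmark class.
struct Registry
{
    struct Entry
    {
        std::string name;
        std::function<std::unique_ptr<BenchmarkBase>()> create;
    };

    static std::vector<Entry>& Entries()
    {
        // Function-local static avoids static initialization order problems.
        static std::vector<Entry> entries;
        return entries;
    }

    static bool Add(const std::string& name,
                    std::function<std::unique_ptr<BenchmarkBase>()> create)
    {
        Entries().push_back({name, std::move(create)});
        return true;
    }
};

// Declares a uniquely named class, registers it before main() runs, and leaves
// the definition of Run() open so the user's braces supply its body.
#define MY_BENCHMARK(Group, Name)                                             \
    struct Group##_##Name final : BenchmarkBase                               \
    {                                                                         \
        void Run() override;                                                  \
    };                                                                        \
    static const bool Group##_##Name##_registered = Registry::Add(            \
        #Group "." #Name,                                                     \
        [] { return std::unique_ptr<BenchmarkBase>(new Group##_##Name()); }); \
    void Group##_##Name::Run()

MY_BENCHMARK(Demo, Addition)
{
    volatile int x = 1 + 1;  // trivial body for illustration
    (void)x;
}
```

A runner would then iterate over Registry::Entries(), create each benchmark, and time its Run() method.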

The TestVector uses the PImpl idiom to hide its implementation and keep the include overhead of Celero.h to a minimum.
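For readers unfamiliar with PImpl, here is a minimal sketch of the idiom. The class NameList is hypothetical and is not TestVector's real interface; in a real project the two halves would live in separate header and source files, and only the source file would include the implementation's headers.

```cpp
#include <cstddef>
#include <memory>
#include <string>
#include <vector>  // in a real split, only the .cpp would need this

// ---- Public header part: Impl is only forward-declared ----
class NameList
{
public:
    NameList();
    ~NameList();  // must be defined where Impl is a complete type

    void Add(const std::string& name);
    std::size_t Size() const;

private:
    class Impl;                  // hidden implementation
    std::unique_ptr<Impl> pimpl;
};

// ---- Source file part: the real data structure lives here ----
class NameList::Impl
{
public:
    std::vector<std::string> names;
};

NameList::NameList() : pimpl(new Impl()) {}
NameList::~NameList() = default;

void NameList::Add(const std::string& name) { pimpl->names.push_back(name); }
std::size_t NameList::Size() const { return pimpl->names.size(); }
```

Because clients of the header never see Impl, its members can change without forcing them to recompile.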

Celero reports its output to the command line. Since colors are nice (and perhaps contribute to the human factors/readability of the results), something beyond std::cout was called for.

Console.h defines a simple color function, SetConsoleColor, which is utilized by the functions in the celero::print namespace to nicely format the program's output.
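As an illustration of console coloring, the sketch below uses ANSI escape sequences. This is an assumption: it works on terminals that understand ANSI codes (typical on Linux and macOS), while older Windows consoles required the Win32 console API instead. The names Color, AnsiCode, and PrintTagged are hypothetical and are not Celero's SetConsoleColor.

```cpp
#include <iostream>
#include <string>

// Hypothetical color set for the sketch.
enum class Color { Default, Red, Green, Yellow };

// Returns the ANSI "select graphic rendition" sequence for a color.
std::string AnsiCode(Color c)
{
    switch (c)
    {
        case Color::Red:    return "\033[31m";
        case Color::Green:  return "\033[32m";
        case Color::Yellow: return "\033[33m";
        default:            return "\033[0m";  // reset to terminal default
    }
}

// Prints a colored bracketed tag, resets the color, then prints the message,
// in the style of lines such as "[ DONE ] CeleroBenchTest.Baseline".
void PrintTagged(Color c, const std::string& tag, const std::string& message)
{
    std::cout << AnsiCode(c) << "[ " << tag << " ]" << AnsiCode(Color::Default)
              << " " << message << "\n";
}
```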

Measuring benchmark execution time takes place in the TestFixture base class, from which all benchmarks are ultimately derived. First, the test fixture setup code is executed. Then the start time for the test is retrieved and stored as an integer number of microseconds (an unsigned long) rather than a floating-point value, to avoid floating-point error. Next, the specified number of operations (iterations) is executed. When they complete, the end time is retrieved, the test fixture is torn down, the measured execution time is returned, and the results are saved.
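One measurement cycle as described above might look like the following sketch. It assumes std::chrono::steady_clock as the timer (the article does not name its time source), and MeasureOneSample with its setUp, body, and tearDown parameters is hypothetical; setup and teardown run outside the timed region, and the result is an integer microsecond count.

```cpp
#include <chrono>
#include <cstdint>
#include <functional>

// Hypothetical sketch of one sample: fixture setup, timed loop, fixture
// teardown. Returns the elapsed time of the loop in whole microseconds.
std::uint64_t MeasureOneSample(const std::function<void()>& setUp,
                               const std::function<void()>& body,
                               const std::function<void()>& tearDown,
                               std::uint64_t operations)
{
    using Clock = std::chrono::steady_clock;

    setUp();  // not timed

    const auto start = Clock::now();
    for (std::uint64_t i = 0; i < operations; ++i)
    {
        body();  // the code under test, executed "operations" times
    }
    const auto end = Clock::now();

    tearDown();  // not timed

    // Integer microseconds rather than a floating-point value.
    return static_cast<std::uint64_t>(
        std::chrono::duration_cast<std::chrono::microseconds>(end - start).count());
}
```

Calling the body through std::function adds a small per-call overhead of its own; since a baseline would be measured the same way, much of that overhead is shared between baseline and benchmark.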

This cycle is repeated for however many samples were specified. If no samples were specified (zero), the test is repeated until it has run for at least one second or at least 30 samples have been taken. While writing this part of the code, there was a clear "if-else" relationship; however, the bulk of the code was repeated in both the "if" and "else" branches. An old-fashioned function could have been used here, but it was natural to use std::function to hold a lambda that both branches could call, which kept the code clean. (C++11 is a fantastic thing.) Finally, the results are printed to the screen.
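The std::function-plus-lambda structure can be sketched as below. BestOfSamples and takeSample are hypothetical names, and the automatic-mode stopping rule is an assumption: this sketch keeps sampling until at least 30 samples exist and at least one second of measured time has accumulated, which is consistent with the 30 samples of roughly 0.5 seconds each reported for the baseline in the Results section.

```cpp
#include <algorithm>
#include <cstdint>
#include <functional>
#include <limits>

// Hypothetical sketch: takeSample returns one sample's time in microseconds.
// Returns the smallest sample time observed.
std::uint64_t BestOfSamples(std::uint64_t samples,
                            const std::function<std::uint64_t()>& takeSample)
{
    std::uint64_t best = std::numeric_limits<std::uint64_t>::max();
    std::uint64_t totalUs = 0;
    std::uint64_t taken = 0;

    // Shared by both branches: take one sample and keep the minimum.
    const std::function<void()> runOnce = [&]() {
        const std::uint64_t us = takeSample();
        best = std::min(best, us);
        totalUs += us;
        ++taken;
    };

    if (samples > 0)
    {
        // Fixed number of samples requested by the user.
        while (taken < samples)
        {
            runOnce();
        }
    }
    else
    {
        // Automatic mode (assumed rule): at least 30 samples and at least
        // one second of accumulated measured time.
        const std::uint64_t minSamples = 30;
        const std::uint64_t minTotalUs = 1000000;
        while (taken < minSamples || totalUs < minTotalUs)
        {
            runOnce();
        }
    }
    return best;
}
```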

Using the code

Celero uses CMake to provide cross-platform builds. It does require a modern compiler (Visual C++ 2012 or GCC 4.7+) due to its use of C++11.

Once Celero is added to your project, you can create dedicated benchmark projects and source files. For convenience, there is a single header file and a CELERO_MAIN macro that provides a main() for your benchmark project, which automatically executes all of your benchmark tests.

Here is an example of a simple Celero Benchmark:

```cpp
#include <celero/Celero.h>

#include <cmath>    // sin, fmod
#include <cstdlib>  // rand

// Provides main(), which runs every registered baseline and benchmark.
CELERO_MAIN;

// Baseline: 0 samples (let Celero decide), 7100000 operations per sample.
BASELINE(CeleroBenchTest, Baseline, 0, 7100000)
{
    celero::DoNotOptimizeAway(static_cast<float>(sin(3.14159265)));
}

// Automatic sample count.
BENCHMARK(CeleroBenchTest, Complex1, 0, 7100000)
{
    celero::DoNotOptimizeAway(static_cast<float>(sin(fmod(rand(), 3.14159265))));
}

// Exactly one sample.
BENCHMARK(CeleroBenchTest, Complex2, 1, 7100000)
{
    celero::DoNotOptimizeAway(static_cast<float>(sin(fmod(rand(), 3.14159265))));
}

// Exactly 60 samples.
BENCHMARK(CeleroBenchTest, Complex3, 60, 7100000)
{
    celero::DoNotOptimizeAway(static_cast<float>(sin(fmod(rand(), 3.14159265))));
}
```

The first thing this code does is define a BASELINE test case. This macro takes four arguments:

BASELINE(GroupName, BaselineName, Samples, Operations)

- GroupName: The name of the benchmark group. This is used to batch together runs and results with their corresponding baseline measurement.
- BaselineName: The name of this baseline, for reporting purposes.
- Samples: The total number of times you want to execute the given number of operations on the test code.
- Operations: The total number of times you want to execute the test code per sample.

Samples and operations are what make it possible to measure very fast code. If a single execution of your benchmark code takes less than, for example, 100 milliseconds, the operations value tells Celero to execute the code that many times before taking a single measurement, so that each measurement spans enough time to rise above clock resolution noise. Samples defines how many such measurements to make.

Celero helps with this by allowing you to specify zero samples. Zero samples tells Celero to choose the number of samples itself, based on how long it takes to complete your specified number of operations. The number of samples chosen is reported at run time.

The celero::DoNotOptimizeAway template is provided to ensure that the optimizing compiler does not eliminate your function or code. Since it is used in all of the sample benchmarks and in their baseline, its time overhead largely cancels out in the comparisons.
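One common way to achieve the same effect is to store the result into a volatile object, since volatile stores are observable behavior the compiler must perform. The sketch below illustrates that technique only; KeepAlive is a hypothetical name and this is not how celero::DoNotOptimizeAway is implemented.

```cpp
#include <type_traits>

// Hypothetical sink: writing the value to a volatile object forces the
// compiler to compute it, so the code producing it cannot be removed.
template <typename T>
void KeepAlive(T value)
{
    static_assert(std::is_arithmetic<T>::value,
                  "this sketch supports arithmetic types only");
    static volatile T sink = T();
    sink = value;  // volatile store: must actually happen
}
```

It would be called the same way as in the example above, e.g. KeepAlive(static_cast<float>(sin(3.14159265))). The volatile store itself costs a memory write per call, which is another reason to use the same sink in the baseline and in every benchmark.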

After the baseline is defined, the benchmarks themselves are defined. The syntax for the BENCHMARK macro is identical to that of the BASELINE macro.

Results

The sample project is configured to automatically execute the benchmark code upon successful compilation. Running this benchmark gave the following output on my PC:

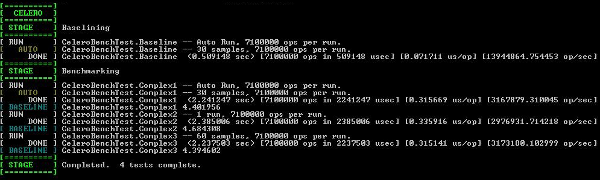

```
[ CELERO ]
[==========]
[ STAGE ] Baselining
[==========]
[ RUN ] CeleroBenchTest.Baseline -- Auto Run, 7100000 calls per run.
[ AUTO ] CeleroBenchTest.Baseline -- 30 samples, 7100000 calls per run.
[ DONE ] CeleroBenchTest.Baseline (0.517049 sec) [7100000 calls in 517049 usec] [0.072824 us/call] [13731773.971132 calls/sec]
[==========]
[ STAGE ] Benchmarking
[==========]
[ RUN ] CeleroBenchTest.Complex1 -- Auto Run, 7100000 calls per run.
[ AUTO ] CeleroBenchTest.Complex1 -- 30 samples, 7100000 calls per run.
[ DONE ] CeleroBenchTest.Complex1 (2.192290 sec) [7100000 calls in 2192290 usec] [0.308773 us/call] [3238622.627481 calls/sec]
[ BASELINE ] CeleroBenchTest.Complex1 4.240004
[ RUN ] CeleroBenchTest.Complex2 -- 1 run, 7100000 calls per run.
[ DONE ] CeleroBenchTest.Complex2 (2.199197 sec) [7100000 calls in 2199197 usec] [0.309746 us/call] [3228451.111929 calls/sec]
[ BASELINE ] CeleroBenchTest.Complex2 4.253363
[ RUN ] CeleroBenchTest.Complex3 -- 60 samples, 7100000 calls per run.
[ DONE ] CeleroBenchTest.Complex3 (2.192378 sec) [7100000 calls in 2192378 usec] [0.308786 us/call] [3238492.632201 calls/sec]
[ BASELINE ] CeleroBenchTest.Complex3 4.240175
[==========]
[ STAGE ] Completed. 4 tests complete.
[==========]
```

The first test to execute is the group's baseline. Its output shows that it was an "auto run", meaning Celero measured the code and decided how many samples to take. In this case, it ran 30 samples of 7100000 calls each. (Each set of 7100000 calls was timed, this was done 30 times, and the smallest time was kept.) That smallest sample took 0.517049 seconds, so each individual call of the baseline code took 0.072824 microseconds on average.
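The per-call and per-second figures on a "[ DONE ]" line follow from simple arithmetic on the sample time and the call count: 517049 us / 7100000 calls is about 0.072824 us/call, and 7100000 calls / 0.517049 s is about 13731773.97 calls/sec. The hypothetical helper below reproduces that arithmetic; it is not Celero's reporting code.

```cpp
#include <cstdint>

// Derived rates for one sample (hypothetical helper).
struct Rates
{
    double usPerCall;    // microseconds per call
    double callsPerSec;  // calls per second
};

// sampleUs: measured sample time in microseconds; callsPerSample: operations.
Rates ComputeRates(std::uint64_t sampleUs, std::uint64_t callsPerSample)
{
    const double us = static_cast<double>(sampleUs);
    const double calls = static_cast<double>(callsPerSample);
    return Rates{us / calls, calls / (us / 1e6)};
}
```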

After the baseline is complete, each individual benchmark is run. Each one is executed and measured in the same way; however, an additional metric is reported: Baseline. This is the ratio of the benchmark's measured time to the baseline's measured time. The data here shows that CeleroBenchTest.Complex1 takes 4.240004 times as long to execute as the baseline.
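The reported Baseline figures are consistent with dividing the benchmark's sample time by the baseline's: 2192290 us / 517049 us is about 4.240004 for Complex1, and 2199197 us / 517049 us is about 4.253363 for Complex2. A hypothetical one-line helper makes the calculation explicit.

```cpp
#include <cstdint>

// Ratio of benchmark time to baseline time (hypothetical helper). A value of
// 4.24 means the benchmark took 4.24 times as long as the baseline; values
// below 1.0 would mean it ran faster than the baseline.
double BaselineRatio(std::uint64_t benchmarkUs, std::uint64_t baselineUs)
{
    return static_cast<double>(benchmarkUs) / static_cast<double>(baselineUs);
}
```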

Points of Interest

- The project is hosted on GitHub.

- Benchmarks should always be performed on Release builds. Never measure the performance of a Debug build and make changes based on the results. The (optimizing) compiler is your friend with respect to code performance.

- Celero has Doxygen documentation of its API.

- Celero supports test fixtures for each baseline group.

- Executing this code while listening to the band "3" makes the benchmarks run faster. Funny thing that.

History

- January 2013 - First published.

- 31 January 2013 - GitHub updated with support for tabular output.