Reap garbage-collector-like advantages without the overhead by rapidly allocating from fixed sized pools of memory sequentially and then recycle the entire pool to make your heap use more efficient.

Introduction

I'm in the process of writing a rather involved JSON library for constrained environments, including the Arduino and compatible platforms. In the process, I found that if I wanted in-memory JSON trees, I needed some way to pool memory and allocate efficiently. Furthermore, I needed the developer to be able to wield total control over how much memory was reserved, and where it was reserved, due to the varying nature of memory on constrained platforms.

Just arbitrarily using new and delete is easy but harmful in cases where you don't have gobs of RAM to allocate or CPU cycles to waste on heap fragmentation. In this case, you need to take some control over where and how your memory gets allocated.

To add a wrinkle to this, the code should be as small as is reasonable because we have a limited amount of program space as well.

The whole goal here is to improve performance without adding a lot of complexity.

Conceptualizing this Mess

When you're running on little devices, everything matters. Nothing can be taken for granted, including your memory. We'd like to avoid using memory altogether, and that is the ideal situation for these devices, but obviously impossible. The next best thing is to reduce its use, and to control where the memory is/what kind of memory it is when you do allocate it.

Contrast that with garbage collected systems running on a paging OS, where memory concerns are almost an afterthought and they are totally different worlds. I was working for years in C# before coming back home to C++ and I was spoiled.

Garbage collected systems are notorious for being resource greedy, but they have an incredible performance advantage over traditional C/C++ heap models and that advantage comes in allocating memory. Traditional heaps usually keep some sort of linked list tracking used and free space. Allocation requires traversing that list. It doesn't seem like much but this adds up fast, and the more fragmented your heap gets, the longer it takes to traverse that list, because there are more chunks. Garbage collectors don't keep a linked list. They keep a simple pointer that they increment whenever an allocation is requested. When that pointer gets close to the end, a garbage collection occurs, compacting the heap and setting the pointer further away from the end again. The magic here is in the collection, and that's where all the work happens to make this function. Basically, what you save in upfront allocation time, you pay for in the back end with more complicated deletion/compaction.

A very nice side benefit of this allocation scheme is if we can guarantee it's always sequential, that means we can do things like allocate a string or vector as we go, without knowing how much room we need in advance. Each time we allocate, that pointer is at the tail end of our last allocation so the memory is contiguous. Used correctly, this can reap huge performance wins.

Well, what if we could have both this wonderful allocation scheme, and some kind of deletion/collection scheme that wasn't so complicated? If we could, we might be able to reap the benefits on constrained devices.

The question is what we can live without? We probably can't afford a garbage collector, but maybe we don't need one.

One problem is garbage collectors are very aggressive about allocating memory. They will reserve as much heap as they can up front. This is important for performance reasons but it doesn't really work well for constrained memory situations. Let's say we can define the size of our pool ourselves, and give them a hard limit. Let's say also that we can make multiple independent pools. Maybe that will give us some flexibility and realistic limits on what our memory allocation looks like.

So we have these small pools, whereas a garbage collector has one big one. The other thing we can't really afford with a garbage collector is tracing all of our object references. That's CPU intensive, complicated to code, and even optimized, it's probably not appropriate for our little device, at least in the general case.

How can we delete our objects then?

Is that the right question? In programming and life, sometimes the right question is worth 100 right answers.

What if the question was, do we need to delete our objects?

What's the alternative?

How about simply recycling the pool, effectively deleting all the objects at once?

Is this really practical? Consider the following scenario:

You want to pick through a large JSON document using a pull parser, and reading just a little bit of JSON at a time.

When you navigate to a section of interest, you load a small fragment of the JSON into an in-memory tree to query it as a unit. You allocate it to a pool you've dedicated for this.

When you're done with that operation, all the in-memory tree objects are no longer needed, so we can free them all at once and recycle the entire pool for the next fragment.

Do you get it? Great!

There are all kinds of scenarios you can handle this way - setup/allocate/prepare, execute, clean up. It's not uncommon. Again, the only real disadvantage is you can't free individual allocations. What this bakes out to is that editing/updating is not efficient. Say you are allocating a string. Now say you want to change that string to a new value. You can create a new string, but you cannot delete the old, so now you're using perhaps twice the memory or more and that's after a single edit. The question is, are you building a DOM? Are you editing objects? Probably not. Load once, use, throw away carries the day for us here.

I'll break this down for you:

Here's what we're after: A pooling, sequentially allocating memory buffer that we can place anywhere - in the heap, or in the stack.

Here's what we give up to get it:

- We can't dynamically reallocate more memory on the fly. We must have an upper capacity specified upfront. This isn't really such a bad thing, as distasteful as it might seem. If it seems distasteful, it's because you're used to coding on full fledged PCs. Again, we don't have gobs of RAM, (usually) multiple threads, or any of the other things that both allow for and tend to demand more memory. We're going back to the very basics here, and that's good for performance. The alternative is that we would have to provide some sort of callback whenever a reallocation happened so that the callee could offset any pointers it stored in that memory with the difference between the original and moved location. That's not only complicated, actually remapping those pointers is pretty much never efficient. That's exactly what makes compaction slow in a garbage collector. We need to avoid that.

- We can't delete items from the pool individually. We can only recycle the entire pool, invalidating all pointers from the pool at once. Again, this isn't such a bad thing if you design your code to be used such that it has a setup/allocate, execute, recycle/cleanup phase, which again is efficient. In concrete terms, rather than having a

remove() method on your List class, just have the push_back() or add() method and expect to clean up the list when you recycle the entire pool. Recycling the entire pool is as efficient as allocation is - actually slightly more. Remember, you're not creating interactive DOMs in this. For the most part, you're processing data. K.I.S.S. applies.

Coding this Mess

Coding against this is pretty simple, once you know how it works. You can create a StaticMemoryPool<C> class to allocate a pool whose maximum working size is known at compile time, or you can create a DynamicMemoryPool whose maximum working size isn't known until it is initialized. In the case of StaticMemoryPool<C>, this memory will be on the stack if it's declared locally, and on the global heap if it's declared as a global variable. DynamicMemoryPool always allocates its memory from the heap.

Allocating is dead simple, and works like malloc(). Give alloc() a size and it will reserve a block of memory from the pool and give you a void* to that memory or nullptr if there was no more room in the pool.

The neat thing about this is successive calls to alloc() will always return a contiguous, sequential pointer.

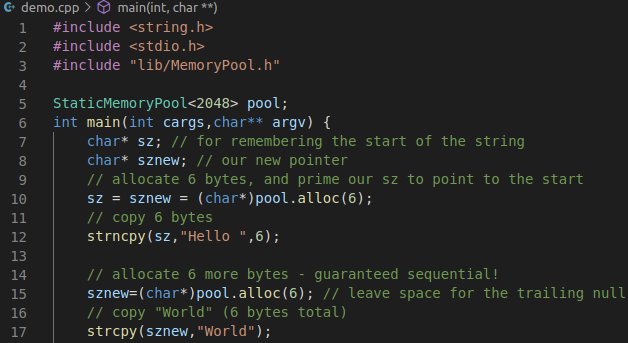

For example, this works (error checking removed for brevity):

char* sz; char* sznew; sz = sznew = (char*)memoryPool.alloc(6);

strncpy(sz,"Hello ",6);

sznew=(char*)memoryPool.alloc(6); strcpy(sznew,"World");

printf("%s",sz);

Freeing must be done by either destroying the class instance or by calling freeAll(). Calling freeAll() is how you recycle the pool.

Recycling the pool is useful if you want to perform a number of successive operations efficiently.

At any point, you can check the capacity() so you know the size of the pool, and used() will tell you how many bytes of the pool are reserved. next() will give you a peek at what the next allocation pointer will be, but it should not be used until alloc() is called. This method is primarily provided for consumers who need to know when the pool has changed for optimization.

It's necessarily simple code:

struct MemoryPool {

public:

virtual void* alloc(const size_t size)=0;

virtual void freeAll()=0;

virtual void* next()=0;

virtual size_t capacity()=0;

virtual size_t used()=0;

};

template<size_t C> class StaticMemoryPool : public MemoryPool {

uint8_t _heap[C];

uint8_t *_next;

public:

void* alloc(const size_t size) override {

if(used()+size>=C)

return nullptr;

void* result = _next;

_next+=size;

return result;

}

void freeAll() override {

_next = _heap;

}

void *next() override {

if(!C)

return nullptr;

return _next;

}

size_t capacity() override { return C; }

size_t used() override {return _next-_heap;}

StaticMemoryPool() : _next(_heap) {}

~StaticMemoryPool() {}

};

class DynamicMemoryPool : public MemoryPool {

uint8_t *_heap;

size_t _capacity;

uint8_t *_next;

public:

DynamicMemoryPool(const size_t capacity) {

if(0==_capacity) {

_heap=_next = nullptr;

}

_heap = new uint8_t[capacity];

_next=_heap;

if(nullptr==_heap)

_capacity=0;

}

void* alloc(const size_t size) override {

if(nullptr==_heap)

return nullptr;

if(used()+size>=_capacity) {

return nullptr;

}

void* result = _next;

_next+=size;

return result;

}

void freeAll() override {

_next = _heap;

}

void* next() override {

return _next;

}

size_t capacity() override { if(nullptr==_heap) return 0; return _capacity; }

size_t used() override { return _next-_heap;}

~DynamicMemoryPool() { if(nullptr!=_heap) delete _heap;}

};

Again, you can see the code is shockingly simple. Allocation is child's play, and the rest is cake too. It's all just basic pointer math and error handling.

You don't have to be afraid of allocation on these devices anymore. With the right tools, we can accomplish incredible things. Hopefully, this little tool helps keep your heap allocations under control and your code tight and efficient. Enjoy!

History

- 14th December, 2020 - Initial submission