ml_reader is streaming pull parser for doing simple parsing of markup documents like XML and HTML. It runs on IoT devices, and has a small footprint.

Introduction

Recently, I needed to parse some HTML from an IoT device. There really isn't anything out there for doing this, understandably as it's kind of niche, but also there is very little out there for IoT in terms of reading arbitrarily sized, and potentially large documents, even in XML, but certainly not HTML.

I also needed it to not do any validation or well-formedness checking. The reason being is I'm using it as part of a rendering engine. Rending does not need validation or well-formedness checking, and doing so is counterproductive.

There is nothing out there I can find with these requirements. That's why I built this. Please mind the limitations.

Conceptualizing this Mess

Let's start with some disclaimers and guidelines, followed by a basic description of what this code is.

Limitations

- This is a pull parser. They are very efficient, but can be a hassle to use, depending on what you're doing. For rendering, they are easy. For structured data navigation, they are not.

- This parser does minimal checking to make sure your document is compliant. It will only stop parsing if it can't figure out what to do next. Tags do not need to be balanced. It doesn't care what tag names are or where they appear. You can put an

<?xml?> declaration in 20 places throughout your document and it won't care. - This parser cannot handle XML namespace resolution, DTD resolution, schema resolution, or custom entity resolution. Basically, it doesn't do any behind the scenes mapping of your element and attribute data. If you want an XML namespace manager, you will need to create one yourself.

- This parser does no whitespace normalization. It's up to you to do the appropriate whitespace normalization to make your output compliant.

- It supports Unicode UTF-8 only, although internally it resolves everything to UTF-32 codepoints. The Unicode hasn't been tested that much, and it seems XML was mostly designed around UTF-16 codepoints?

- The HTML entity encoding support substantially increases the code size versus the XML only portion, by as much as 30kB-40kB.

When to Use This Reader

- You need a flat semi-structured stream of tags and text, usually for filtering, searching, or rendering.

- You need to scrape some HTML. See #1.

- You want to build an XML reader on top of it

- You just need a quick and dirty reader, and you're willing to live with the rough spots.

When NOT to Use This Reader

- You want access to highly structured XML data. (You must build XML conformance checking, add a stack and XML namespace and custom entity resolution support on top of this reader.)

- You want a rich, easy to use XML processor. (You must build higher level features like a DOM on top of this.)

- You want to turn HTML into XML. (You must build HTML conformance checking and add a stack.)

- You want to safely and reliably access SOAP and WSDL based web services. See #1.

What is a Pull Parser?

A pull parser is an efficient parser that parses incrementally, one step at a time. You typically call it in a loop, and in each loop iteration, you query various parser state to figure out where you are in the document and what you're looking at under the cursor. Using one looks something like the following:

while(reader.read()) {

switch(reader.node_type()) {

case ml_node_type::element:

break;

case ml_node_type::content:

break;

case ml_node_type::element_end:

break;

case ml_node_type::attribute:

break;

...

}

}

The advantages of using them are they don't require much memory, they work with forward only streaming sources like HTTP streams coming from the web, and they are generally speaking, pretty fast.

The disadvantage is that they can be difficult to use directly, although you can easily implement higher level code on top of it. The data you get back tends to come in a flat stream. To impose a heirarchal structure on that stream you'll often need to use a stack, pushing on element and popping on element_end, for example. In my use case, I don't need this additional structure so I wanted something that allowed me to work closer to the metal.

Pull Parsing Markup

To pull parse markup involves working with various markup document constructs like elements <foo>, end elements </foo>, attributes foo/foo="bar", comments <!--foo--> , processing instructions <?foo?> and notations <!foo>.

Furthermore, since this pull parser is designed to use very little memory and to avoid heap allocations, it instead will stream content - including attribute value content - as a series of chunks, meaning you will not get the entire value back in one read() call unless the entire contents can fit into your capture buffer. To keep the complexity from getting overwhelming, the parser will not stream names such as element or attribute names. The entire name must fit into the capture buffer but this limitation does not apply to element, document or attribute content. By default, the ml_reader uses a 1kB capture buffer, which is more generous, if anything. That means element and attribute names in the document can be up to 1kB in length, and that values and content can be that long as well without being broken up and returned a piece at a time.

Real World Parsing

Keep in mind a lot of the below won't seem immediately practical on an IoT device. The fact that I list the stuff below should not be read as an endorsement. I see very little reason to make an HTML pretty printer run on an Arduino, for example. However, I provided these uses below in the interest of completeness, because I wouldn't be so presumptious as to assume I could think of all the reasons you might want to pretty print HTML on an IoT device. I just covered everything I could think of, in broad strokes, whether or not I saw a use case there.

Rendering with this Mess

To render with this realistically, you'll probably need to keep some kind of context structure around while you loop. As you encounter open tags, like the <b> tag, you'll indicate in the context that bold is set. From thereon, you draw text in bold until you encounter a </b> tag. The whole document renders like that. You'll probably need a stack if you want to do CSS styles or really anything complicated. This is how commercial web browsers do it.

Searching and Scraping with this Mess

This is actually pretty easy to do with this. You simply loop and ignore everything but the relevant attributes or elements you want. For example, if I wanted to get all URLs out of a document (including images), I'd loop looking for href and src attributes and stash their attribute content.

Pretty Printing and Conformance Checking

These are actually very similar tasks under the covers, which is why I list them together. Either way, you'll have to build code to impose a hierarchy and verify the well-formedness of the input document. This will require a stack. You'll also have to be careful to narrow the sorts of entities it can accept if you're parsing XML, and to make sure that the attribute quotes are consistent.

Doing Data Exchange with this Mess

Oh you thought you wanted to use this for XML structured data, did you? Ha! Good luck. By itself, this reader doesn't even check to make sure your XML is well formed, much less conforming, and of course there is no validation. These features would be necessary to make your code safe and reliable. You can build them on top of this reader though, as I alluded to in the "Pretty Printing and Conformance Checking" portion. You'll probably also need some kind of XML namespace resolver code. If all you ever need is XML and mostly compliant XML at that, you may get more mileage out of this code here.

Coding this Mess

Below, we'll be doing something of a poor man's "identity transform" on a markup document. We read it from the input stream into a structured form, and we reproduce it on the output stream. Doing this basically allows one to demonstrate and test all (or at least most) of the parser features.

The identity transform isn't accurate. It doesn't do one important thing - it does not re-encode the entity references it had decoded before, so for example your won't be round-tripped. In several cases, this can lead to bad output, so do not use this sample code listed below in production. It is for testing and demonstration.

Parsing gets weird - pull parsing doubly so. One grotty thing about the code below - and honestly, if it was production code, I would have structured it better and duplicated less - is that the reader doesn't specifically indicate when it has advanced from an element to its contents, and the code has to contend with that. The problem comes when moving from attribute, attribute content, or element node types to an end tag, a nested tag, or nested content. The reader doesn't say "hey, we just advanced past the open tag, time to print a >!" because there's no good reason for it to in the general case. It only matters if we're actually trying to recreate the text of the markup into an output stream, which is almost never done in practice, unless you're pretty printing, and you're not doing that, are you?

Other than that, we have to be careful to remember that you can get attribute content multiple times for a single attribute, and also you can get attributes with no attribute content, such as with <input disabled>. You only get ml_node_type::attribute_content and ml_node_type::attribute_end if your attribute actually has content. You wouldn't get those calls for the tag above.

Aside from the value() member, we also look for attribute_quote() and is_empty_element() to determine which quote delimiter was used for the attribute, if any, and whether or not the element was terminated with />.

I've given you regular C++ code below. However, this will work on the Arduino framework, it's just that the lack of printf() would have made this code even longer:

const char* path ="ch01.xhtml";

file_stream fs(path);

if(!fs.caps().read) {

printf("couldn't find file\r\n");

return -1;

}

ml_reader mr(&fs);

int first_attr_val = 0;

ml_node_type ont = ml_node_type::initial;

int empty_elem = 0;

while(mr.read()) {

switch(mr.node_type()) {

case ml_node_type::comment:

case ml_node_type::pi:

case ml_node_type::notation:

case ml_node_type::element:

case ml_node_type::content:

if(ont==ml_node_type::attribute||

ont==ml_node_type::attribute_end||

ont==ml_node_type::element) {

if(empty_elem) {

printf("/");

}

printf(">");

}

break;

}

switch(mr.node_type()) {

case ml_node_type::comment:

printf("<!--");

break;

case ml_node_type::comment_end:

printf("-->");

break;

case ml_node_type::pi:

printf("<?%s ",mr.value());

break;

case ml_node_type::pi_end:

printf("?>");

break;

case ml_node_type::notation:

printf("<!%s ",mr.value());

break;

case ml_node_type::element:

printf("<%s",mr.value());

break;

case ml_node_type::attribute:

printf(" %s",mr.value());

first_attr_val = 1;

break;

case ml_node_type::attribute_content:

if(0!=first_attr_val) {

first_attr_val = 0;

if(0!=mr.attribute_quote())

printf("=%c",mr.attribute_quote());

else

printf("=");

}

printf("%s",mr.value());

break;

case ml_node_type::element_end:

if(!mr.is_empty_element()) {

if(ont==ml_node_type::attribute||

ont==ml_node_type::attribute_end||

ont==ml_node_type::element) {

printf(">");

}

printf("</%s>",mr.value());

} else {

printf("/>");

}

break;

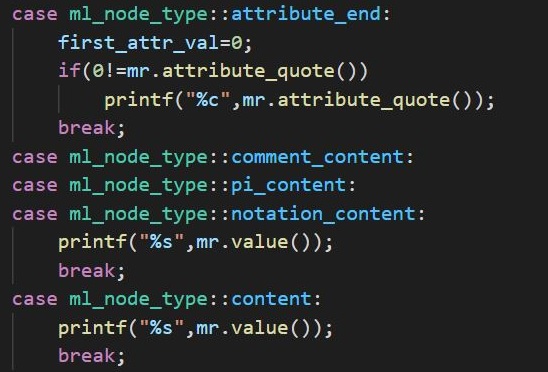

case ml_node_type::attribute_end:

first_attr_val=0;

if(0!=mr.attribute_quote())

printf("%c",mr.attribute_quote());

break;

case ml_node_type::comment_content:

case ml_node_type::pi_content:

case ml_node_type::notation_content:

case ml_node_type::content:

printf("%s",mr.value());

break;

case ml_node_type::notation_end:

printf(">");

break;

}

ont = mr.node_type();

if(ont==ml_node_type::element) {

empty_elem = mr.is_empty_element();

}

}

printf("\r\ndone!\r\n");

return 0;

Like my other recent offerings, this uses my stream library (included) to handle I/O. Once again, the STL isn't always available under IoT so that precludes using std::iostream<> because it's questionable how much of it is implemented under any given Arduino framework implementation.

Points of Interest

In ml_reader_fa.hpp, you'll find several large arrays of integers. These are deterministic finite state automata tables. What they do is they help decode entities like or A. At the bottom of that file is code to match text based on those state machines, of which there are two - one for XML and one that includes both HTML and XML. What it does is read characters out of a stream one at a time, and run the selected state machine over the input until it gets to an "accepting state." Once reached, the accepting state contains the Unicode codepoint that the entity translates to, so for example, will resolve to 0xA0.

I didn't hand build those arrays. That would have been next to impossible. Instead, I wrote a C# application, ml_entity_gen. It is based on the code for Rolex, a lexer generator I wrote last year for C# and VB.NET. However, I've gutted it for the code I needed and then built some quick and dirty routines to generate C++ arrays instead. I've included to code for curiosity's sake although if you want some legible code for the lexer generator stuff, download the Rolex project. The source in the above project is minimized for reasons.

History

- 25th July, 2021 - Initial submission