This article starts with an overview of what a typical computer vision application requires. Then, it introduces Pipeless, an open-source computer vision framework that offers a serverless development experience for embedded computer vision. Finally, it includes a step-by-step guide on the creation and execution of a simple object detection application with just a couple Python functions and a YOLO model. By the end of the article, you will understand how the Pipeless framework works and how to create and deploy applications with it in just minutes.

Introduction - Inside a Computer Vision Application

The art of identifying visual events via a camera interface and reacting to them.

The above sentence is what I would answer if someone asked me to describe what computer vision is in one sentence. But it is probably not what you want to hear. So let's dive into how computer vision applications are typically structured and what is required in each subsystem.

You typically need:

- Really fast frame processing. Note that to process a stream of 60 FPS in real-time, you only have 16 ms to process each frame. This is achieved, in part, via multi-threading and multi-processing. In many cases, you want to start processing a frame even before the previous one has finished.

- An AI model to run inference on each frame and perform object detection, segmentation, pose estimation, etc. Luckily, there are more and more open-source models that perform pretty well, so we don't have to create our own from scratch, you usually just fine-tune the parameters of a model to match your use case (we will not deep dive into this today).

- An inference runtime. The inference runtime takes care of loading the model and running it efficiently on the different available devices (GPUs or CPUs).

- A GPU. To run the inference using the model fast enough, we require a GPU. This happens because GPUs can handle orders of magnitude more parallel operations than a CPU, and a model at the lowest level is just a huge bunch of mathematical operations. You will need to deal with the memory where the frames are located. They can be at the GPU memory or at the CPU memory (RAM) and copying frames between those is a very heavy operation due to the frame sizes that will make your processing slow.

- Multimedia pipelines. These are the pieces that allow you to take streams from sources, split them into frames, provide them as input to the models and, sometimes, make modifications and re-build the stream to forward it.

- Stream management. You may want to make the application resistant to interruptions in the stream, re-connections, adding and removing streams dynamically, processing several of them at the same time, etc.

All those systems need to be created and maintained. The problem is that you end up maintaining code that is not actually focused on your application, but subsystems around the actual use case specific code.

The Pipeless Framework

To avoid having to build all the above from scratch, you can use Pipeless. It is an open-source framework for computer vision that allows you to simply provide your use case specific logic and takes care of everything else out-of-the-box.

Pipeless splits the applications logic into "stages", where a stage is like a micro app for a single model. It can include pre-processing, running inference with the pre-processed input, and post-processing the model output to take any action.

One of the best points of that architecture is that you can chain that structure as many times as you want for a stream, so you can chain several models that take care of different tasks.

To provide the steps to each stage, you simply add a code function, that is very specific to your application, and Pipeless takes care of running it when required. This is why you can think about Pipeless as a serverless framework for embedded computer vision. You provide a few functions and that's all, you don't have to worry about all the surrounding systems that you need.

Another great feature of Pipeless is that you can add, remove and update streams dynamically and this can be done via a CLI or a REST API to fully automate your workflows. You can even specify restart policies that indicate when the processing of a stream should be restarted, whether it should be restarted after an error, etc.

Finally, to deploy Pipeless, you just need to install it and run it along with your code functions on any device, whether it is in a cloud VM or containerized mode, or directly within an edge device like a Nvidia Jetson, a Raspberry, or any others.

Creating an Object Detection Application

Let's deep dive into how to create a simple application for object detection using Pipeless.

The first thing we have to do is to install it. Thanks to the installation script, it is very simple:

curl https://raw.githubusercontent.com/pipeless-ai/pipeless/main/install.sh | bash

Now, we have to create a project. A Pipeless project is a directory that contains stages. Every stage is under a sub-directory, and inside each sub-directory, we create the files for our functions. The name that we provide to each stage folder will be the stage name that we have to indicate to Pipeless if we want to run that stage for a stream.

pipeless init my-project --template empty

cd my-project

Here, the empty template tells the CLI to just create the directory, if you do not provide any template, the CLI will prompt you several questions to create the stage interactively.

As mentioned above, we now need to add a stage to our project. Let's download an example stage from GitHub with the following command:

wget -O - https://github.com/pipeless-ai/pipeless/archive/main.tar.gz |

tar -xz --strip=2 "pipeless-main/examples/onnx-yolo"

That will create a directory with the name onnx-yolo that contains our application functions and is the name of our stage.

Let's check the content of each of the stage files.

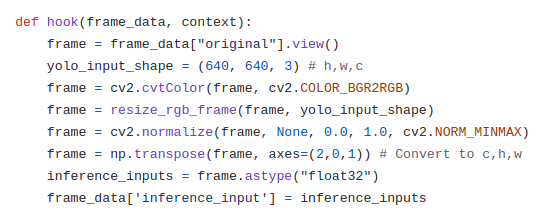

We have the pre-process.py file, which defines a function (hook) taking a frame and a context. The function makes some operations to prepare some input data from the received RGB frame in order to match the format that the model expects. That data is added to the frame_data['inference_input'] which is what Pipeless will pass to the model.

def hook(frame_data, context):

frame = frame_data["original"].view()

yolo_input_shape = (640, 640, 3)

frame = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)

frame = resize_rgb_frame(frame, yolo_input_shape)

frame = cv2.normalize(frame, None, 0.0, 1.0, cv2.NORM_MINMAX)

frame = np.transpose(frame, axes=(2,0,1))

inference_inputs = frame.astype("float32")

frame_data['inference_input'] = inference_inputs

... (some other auxiliar functions that we call from the hook function)

We also have the process.json file, which indicates Pipeless the inference runtime to use, in this case the ONNX Runtime, where to find the model that it should load, and some optional parameters for the inference runtime, such as the execution_provider to use, i.e., CPU, CUDA, TensortRT, etc.

{

"runtime": "onnx",

"model_uri": "https://pipeless-public.s3.eu-west-3.amazonaws.com/yolov8n.onnx",

"inference_params": {

"execution_provider": "tensorrt"

}

}

Finally, the post-process.py file also defines a function that takes the frame data and the context, like in the case of the pre-process.py. This time, it takes the inference output that Pipeless stored at frame_data["inference_output"] and performs the operations to parse that output into bounding boxes. Later, it draws the bounding boxes over the frame, to finally assign the modified frame to frame_data['modified']. With that, Pipeless will forward the stream that we provide but with the modified frames including the bounding boxes.

def hook(frame_data, _):

frame = frame_data['original']

model_output = frame_data['inference_output']

yolo_input_shape = (640, 640, 3)

boxes, scores, class_ids =

parse_yolo_output(model_output, frame.shape, yolo_input_shape)

class_labels = [yolo_classes[id] for id in class_ids]

for i in range(len(boxes)):

draw_bbox(frame, boxes[i], class_labels[i], scores[i])

frame_data['modified'] = frame

... (some other auxiliar functions that we call from the hook function)

The final step is to start Pipeless and provide a stream. To start Pipeless, simply run the following command from the project directory:

pipeless start --stages-dir .

Once running, let's provide a stream from the webcam (v4l2) and show the output directly on the screen, note we have to provide the list of stages that the stream should execute in order, in our case it is just the onnx-yolo stage:

pipeless add stream --input-uri "v4l2" --output-uri "screen" --frame-path "onnx-yolo"

And that's all!

Conclusion

We have described how creating a computer vision application is a complex task due to many factors and subsystems that we have to implement around it. However, with a framework like Pipeless, getting up and running takes just a few minutes and you can focus just on writing the code for your specific use case. Furthermore, Pipeless' stages are highly reusable and easy to maintain so the maintenance will be easy and you will be able to iterate very fast.

If you want to get involved with Pipeless and contribute to its development, you can do so through its GitHub repository at https://github.com/pipeless-ai/pipeless.

Don't forget to support its development by starring the repo!

History

- 27th December, 2023: Initial version