|

Is there an install.log file in the C:\Program Files\CodeProject\AI\modules\ObjectDetectionYolo folder you can share?

cheers

Chris Maunder

|

|

|

|

|

Installing CodeProject.AI Analysis Module

========================================================================

CodeProject.AI Installer

========================================================================

CUDA Present...True

Allowing GPU Support: Yes

Allowing CUDA Support: Yes

General CodeProject.AI setup

Creating Directories...Done

Installing module ObjectDetectionYolo

Installing python37 in C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37

Checking for python37 download...Present

Creating Virtual Environment...Python 3.7 Already present

Enabling our Virtual Environment...Done

Confirming we have Python 3.7...present

Ensuring Python package manager (pip) is installed...Done

Ensuring Python package manager (pip) is up to date...Done

Choosing Python packages from requirements.windows.cuda.txt

ERROR: Exception:

Traceback (most recent call last):

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\cli\base_command.py", line 169, in exc_logging_wrapper

status = run_func(*args)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\cli\req_command.py", line 248, in wrapper

return func(self, options, args)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\commands\install.py", line 378, in run

reqs, check_supported_wheels=not options.target_dir

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\resolution\resolvelib\resolver.py", line 93, in resolve

collected.requirements, max_rounds=limit_how_complex_resolution_can_be

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\resolvelib\resolvers.py", line 546, in resolve

state = resolution.resolve(requirements, max_rounds=max_rounds)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\resolvelib\resolvers.py", line 397, in resolve

self._add_to_criteria(self.state.criteria, r, parent=None)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\resolvelib\resolvers.py", line 173, in _add_to_criteria

if not criterion.candidates:

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\resolvelib\structs.py", line 156, in __bool__

return bool(self._sequence)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\resolution\resolvelib\found_candidates.py", line 155, in __bool__

return any(self)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\resolution\resolvelib\found_candidates.py", line 143, in <genexpr>

return (c for c in iterator if id(c) not in self._incompatible_ids)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\resolution\resolvelib\found_candidates.py", line 47, in _iter_built

candidate = func()

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\resolution\resolvelib\factory.py", line 211, in _make_candidate_from_link

version=version,

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\resolution\resolvelib\candidates.py", line 299, in __init__

version=version,

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\resolution\resolvelib\candidates.py", line 156, in __init__

self.dist = self._prepare()

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\resolution\resolvelib\candidates.py", line 225, in _prepare

dist = self._prepare_distribution()

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\resolution\resolvelib\candidates.py", line 304, in _prepare_distribution

return preparer.prepare_linked_requirement(self._ireq, parallel_builds=True)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\operations\prepare.py", line 516, in prepare_linked_requirement

return self._prepare_linked_requirement(req, parallel_builds)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\operations\prepare.py", line 593, in _prepare_linked_requirement

hashes,

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\operations\prepare.py", line 170, in unpack_url

hashes=hashes,

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\operations\prepare.py", line 107, in get_http_url

from_path, content_type = download(link, temp_dir.path)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\network\download.py", line 134, in __call__

resp = _http_get_download(self._session, link)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\network\download.py", line 117, in _http_get_download

resp = session.get(target_url, headers=HEADERS, stream=True)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\requests\sessions.py", line 600, in get

return self.request("GET", url, **kwargs)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_internal\network\session.py", line 517, in request

return super().request(method, url, *args, **kwargs)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\requests\sessions.py", line 587, in request

resp = self.send(prep, **send_kwargs)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\requests\sessions.py", line 701, in send

r = adapter.send(request, **kwargs)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\cachecontrol\adapter.py", line 57, in send

resp = super(CacheControlAdapter, self).send(request, **kw)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\requests\adapters.py", line 584, in send

return self.build_response(request, resp)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\cachecontrol\adapter.py", line 84, in build_response

request, response

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\cachecontrol\controller.py", line 410, in update_cached_response

cached_response = self.serializer.loads(request, self.cache.get(cache_url))

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\cachecontrol\serialize.py", line 95, in loads

return getattr(self, "_loads_v{}".format(ver))(request, data, body_file)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\cachecontrol\serialize.py", line 186, in _loads_v4

cached = msgpack.loads(data, raw=False)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\msgpack\fallback.py", line 125, in unpackb

ret = unpacker._unpack()

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\msgpack\fallback.py", line 590, in _unpack

ret[key] = self._unpack(EX_CONSTRUCT)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\msgpack\fallback.py", line 590, in _unpack

ret[key] = self._unpack(EX_CONSTRUCT)

File "C:\Program Files\CodeProject\AI\runtimes\bin\windows\python37\venv\lib\site-packages\pip\_vendor\msgpack\fallback.py", line 603, in _unpack

return bytes(obj)

MemoryError

Installing Packages into Virtual Environment...Success

Ensuring Python package manager (pip) is installed...Done

Ensuring Python package manager (pip) is up to date...Done

Choosing Python packages from requirements.txt

Installing Packages into Virtual Environment...Success

Downloading Standard YOLO models...Expanding...Done.

Downloading Custom YOLO models...Expanding...Done.

Module setup complete

Installer exited with code 0
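Worth noting from the log above: pip raised a MemoryError while choosing packages from requirements.windows.cuda.txt, yet the installer still printed "Success" and exited with code 0. Below is a minimal Python sketch for spotting this kind of masked failure in an install.log. The patterns and the `find_masked_errors` name are assumptions made for illustration; they are not part of the CodeProject.AI installer.

```python
import re

# Markers that suggest a failure inside the log even when the installer
# reports success. These patterns are assumptions based on the log above.
ERROR_PATTERNS = [
    re.compile(r"^ERROR:"),
    re.compile(r"^Traceback \(most recent call last\):"),
    re.compile(r"^\w*(Error|Exception)\b"),  # e.g. a bare "MemoryError" line
]


def find_masked_errors(log_text):
    """Return (line_number, line) pairs for lines that look like errors."""
    hits = []
    for number, line in enumerate(log_text.splitlines(), start=1):
        stripped = line.strip()
        if any(p.search(stripped) for p in ERROR_PATTERNS):
            hits.append((number, stripped))
    return hits


# A shortened excerpt of the log above, used as sample input.
sample = """\
Choosing Python packages from requirements.windows.cuda.txt
ERROR: Exception:
Traceback (most recent call last):
return bytes(obj)
MemoryError
Installing Packages into Virtual Environment...Success
Installer exited with code 0
"""

for number, line in find_masked_errors(sample):
    print(f"line {number}: {line}")
```

The traceback ends inside pip's HTTP cache code (cachecontrol/serialize.py and msgpack), so clearing pip's cache with `pip cache purge`, or installing with `--no-cache-dir`, may be worth trying. That is a guess from the traceback, not a confirmed fix.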

|

|

|

|

|

Do you want it in another format?

|

|

|

|

|

Did you want me to send this somehow? I posted it on this thread earlier but maybe you wanted it a different way.

|

|

|

|

|

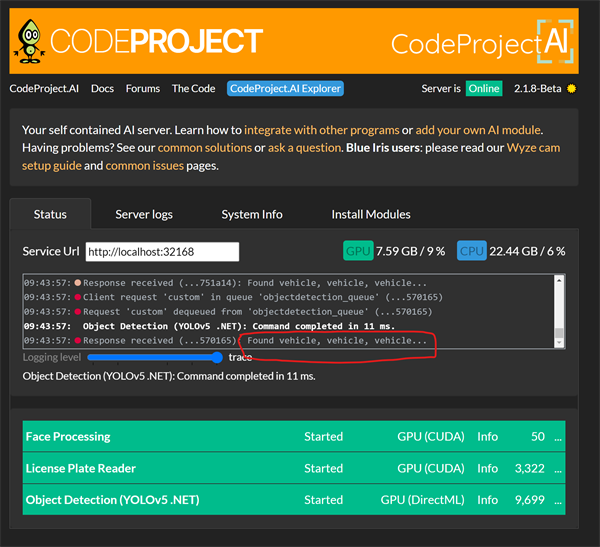

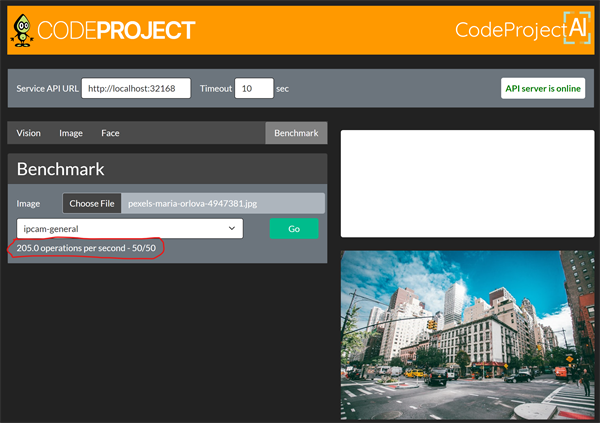

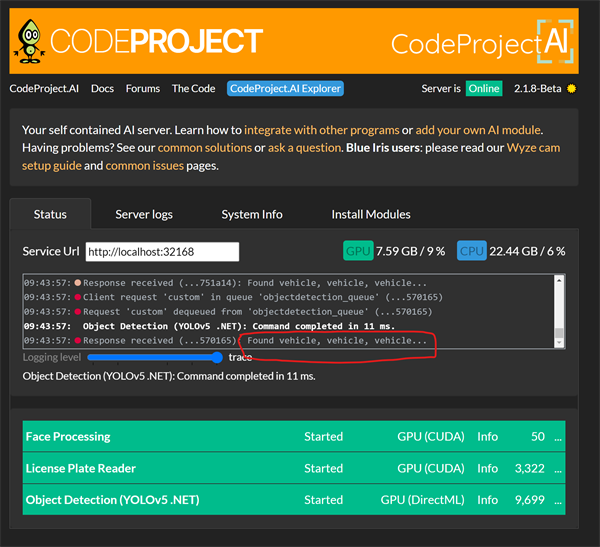

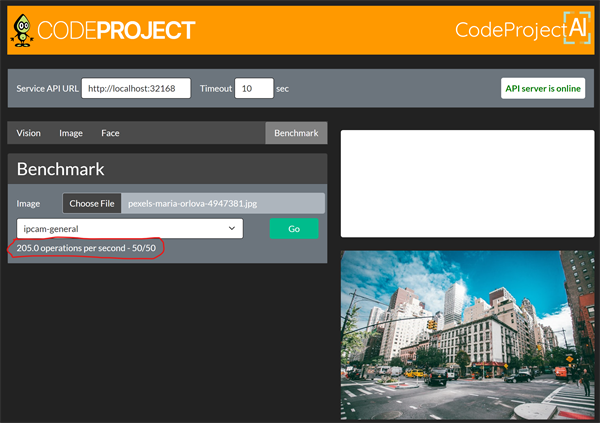

I am using the .NET module now. It is kind of working, but it is running my GPU at 100% all the time. I sure would like to get back to the 6.2 module if possible. Thanks!

|

|

|

|

|

I've tried to set up NVR agent with CodeProject.AI. I'm wondering if there is a log file that will tell me whether a request was made to CodeProject.AI to detect an object at a certain time, and what CodeProject.AI's response was (object = true, or maybe object = false). Sorry, I'm really a newbie.

|

|

|

|

|

You can open the Dashboard and set the logging level to Trace.
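If you copy the trace output into a text file, a few lines of Python can match each request to its response. This is only a sketch: it assumes the trace lines look like the "Client request ... (...id)" and "Response received (...id): ..." lines other posters have pasted in this thread, and the `pair_requests` name is made up for illustration.

```python
import re

# Assumed line shapes, taken from trace logs quoted in this thread.
REQUEST_RE = re.compile(
    r"^(\d{2}:\d{2}:\d{2}):Client request '(\w+)' in queue '(\w+)' \(\.\.\.(\w+)\)"
)
RESPONSE_RE = re.compile(
    r"^(\d{2}:\d{2}:\d{2}):Response received \(\.\.\.(\w+)\): (.*)$"
)


def pair_requests(lines):
    """Map each request ID to its request time, command and response text."""
    requests = {}
    for line in lines:
        line = line.strip()
        m = REQUEST_RE.match(line)
        if m:
            time, command, _queue, req_id = m.groups()
            requests.setdefault(req_id, {}).update(requested=time, command=command)
            continue
        m = RESPONSE_RE.match(line)
        if m:
            time, req_id, text = m.groups()
            requests.setdefault(req_id, {}).update(responded=time, response=text)
    return requests


sample = [
    "15:35:25:Client request 'detect' in queue 'objectdetection_queue' (...6b2822)",
    "15:35:26:Response received (...6b2822): The interpreter is in use. Please try again later",
    "15:35:41:Client request 'detect' in queue 'objectdetection_queue' (...34bc7f)",
    "15:36:08:Response received (...34bc7f): Found person",
]

for req_id, info in pair_requests(sample).items():
    print(req_id, info.get("requested"), "->", info.get("response", "(no response logged)"))
```

A response such as "Found person" or "No objects found" is the closest thing in these logs to object = true / object = false.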

|

|

|

|

|

|

Hi all. I’m just wondering if this is a known bug or if it is something to look into.

This occurred whilst running 2.14 and now on 2.18 via Docker, with both the rpi64 and the arm Docker images. I have the USB Coral stick installed on an RPi 4 / Odroid M1. I’m using Blue Iris on a bare-metal Windows 10 machine. After several hours of use with <100ms detection times, the detection times rise to >1000ms and no detections are reported in Blue Iris. The problem is fixed by restarting either the Docker container or just the TF-Lite module from the CodeProject.AI server web page. After this, detection times return to <100ms (I’m using the small model size and the sub stream for analysis).

If it’s a known issue I’ll patiently wait but if you think it’s something wrong with my setup let me know!

|

|

|

|

|

I've not experienced this, but I'll add this one to our bug list and see if we can replicate / fix the issue.

Off the bat it sounds like a resource (memory?) depletion issue.

cheers

Chris Maunder

|

|

|

|

|

I am having exactly the same problem. It occurs after about 12 hours of use: detection times rise from approximately 100ms to 1000ms. I have version 2.19 with Docker on a Raspberry Pi 3. If I restart TF-Lite using the "..." on the web interface, that solves the problem for another 12 hours or so before detection times rise again to 1000ms.

|

|

|

|

|

I've played around with it a little more leaving the logs at trace and this is what I've found out.

Memory remains pretty constant from the time it is <100ms through the time I notice it increase to >1000ms, so that doesn't suggest it's a memory issue. Specifically, when I view the memory usage through Portainer, the container for CodeProject.AI shows a constant usage of 700 MB.

HOWEVER, I notice in the trace logs lots of: "The interpreter is in use. Please try again later."

Does any of this information help? Is there anything else that might help in resolving this issue?

Thank you

|

|

|

|

|

|

Sorry for this question, but can someone remind me where to see the detection time?

|

|

|

|

|

You can see them in the Dashboard log, and also when using the Explorer.

|

|

|

|

|

Thank you. I seem to also recall seeing the same info in Blue Iris.

|

|

|

|

|

Are you seeing the memory use increase over time? How many detections per second, minute, or hour are you processing?

cheers

Chris Maunder

|

|

|

|

|

I have restarted the Docker container and the host device, and the Coral TPU is now no longer detected by the 2.19-beta container. I have had to delete the TF-Lite module and reinstall it, which seems to have worked.

Here is a snapshot of the log over 5 minutes. I hope this helps estimate the number of detections/hr.

09:26:31:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...a742a5) took 59ms

09:26:45:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...3ba9b6) took 43ms

09:26:45:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...b7ef09) took 50ms

09:26:45:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...0076b0) took 45ms

09:26:45:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...d7d5f5) took 49ms

09:26:45:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...c89d7f) took 46ms

09:28:31:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...e56b12) took 59ms

09:28:45:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...a7015f) took 45ms

09:29:15:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...94ad42) took 49ms

09:29:32:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...cb1206) took 50ms

09:30:01:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...b3b5cf) took 171ms

09:30:31:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...ba2ca2) took 55ms

09:30:31:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...2a6a94) took 43ms
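For turning a snapshot like this into a detections/hr figure, here is a rough Python sketch. It assumes the "took NNms" line format shown above, and the `summarize` name is made up for illustration. The log carries no date, so it will not handle a snapshot that crosses midnight.

```python
import re
from datetime import datetime

# Assumed format of the timing lines, based on the snapshot above.
TOOK_RE = re.compile(
    r"^(\d{2}:\d{2}:\d{2}):.*command 'detect' \(\.\.\.\w+\) took (\d+)ms"
)


def summarize(lines):
    """Return (count, requests_per_hour, mean_ms) for 'detect' timing lines."""
    times, durations = [], []
    for line in lines:
        m = TOOK_RE.match(line.strip())
        if m:
            times.append(datetime.strptime(m.group(1), "%H:%M:%S"))
            durations.append(int(m.group(2)))
    if len(times) < 2:
        raise ValueError("need at least two timing lines")
    span_s = (times[-1] - times[0]).total_seconds()
    if span_s <= 0:
        raise ValueError("timestamps do not span a positive interval")
    per_hour = len(times) / span_s * 3600
    return len(times), per_hour, sum(durations) / len(durations)


# Three of the lines from the snapshot above, used as sample input.
sample = [
    "09:26:31:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...a742a5) took 59ms",
    "09:28:45:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...a7015f) took 45ms",
    "09:30:31:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...2a6a94) took 43ms",
]

count, per_hour, mean_ms = summarize(sample)
print(f"{count} requests, about {per_hour:.0f}/hr, mean {mean_ms:.0f}ms")
```

Counting by hand, the full snapshot above has 13 requests between 09:26:31 and 09:30:31 (240 seconds), which by this rough measure is about 195 requests per hour.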

|

|

|

|

|

So I can confirm this still happens on my RPi 4 (4GB version) with 2.19-Beta. The Coral TPU is still showing as connected in the CPAI webserver.

This is an example of the log output.

17:15:40:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...0c1c9c) took 1021ms

17:15:40:Response received (...0c1c9c): The interpreter is in use. Please try again later

17:16:18:Request 'detect' dequeued from 'objectdetection_queue' (...7d4f6c)

17:16:18:Client request 'detect' in queue 'objectdetection_queue' (...7d4f6c)

Memory Allocation:

| | total | used | free | shared | buff/cache | available |
|---|---|---|---|---|---|---|
| Mem: | 3794 | 649 | 153 | 24 | 2991 | 3069 |
| Swap: | 99 | 0 | 99 | | | |

Restarting the TF-Lite module from within the CPAI web page again fixes this error.
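Until there is a proper fix, the restart could in principle be triggered automatically once the busy responses start. The Python sketch below only covers the detection side. `StuckDetector`, `watch` and the `restart_module` hook are hypothetical names, and how the restart is actually performed (web page, container restart, something else) is left open because nothing in this thread documents an API for it.

```python
from collections import deque

BUSY_TEXT = "The interpreter is in use"


class StuckDetector:
    """Flags a stuck module after `threshold` consecutive busy responses."""

    def __init__(self, threshold=3):
        self.recent = deque(maxlen=threshold)

    def observe(self, response_text):
        """Record one response; return True once the module looks stuck."""
        self.recent.append(BUSY_TEXT in response_text)
        return len(self.recent) == self.recent.maxlen and all(self.recent)


def watch(responses, restart_module, threshold=3):
    """Call `restart_module()` (a hypothetical hook) when the detector trips."""
    detector = StuckDetector(threshold)
    restarts = 0
    for text in responses:
        if detector.observe(text):
            restart_module()
            detector.recent.clear()  # start counting afresh after a restart
            restarts += 1
    return restarts


busy = "The interpreter is in use. Please try again later"
responses = ["No objects found", busy, busy, busy, "Found person"]
print(watch(responses, restart_module=lambda: print("restart requested")))
```

Requiring several busy responses in a row avoids restarting on a single collision between two overlapping requests; the threshold of 3 is arbitrary.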

|

|

|

|

|

Just wanted to confirm I'm also experiencing the TF-Lite detection time spike issue as previously discussed.

I'm running Version 2.1.9 on a Raspberry Pi 4 via Docker, and the same issue was present on Version 2.1.4 as well.

The detection time starts at 150-300ms but then increases to over 1000ms after a few hours, and restarting the model or container only temporarily mitigates the problem.

In light of memory usage being highlighted as a possible culprit (as per Chris's suggestion), I switched to a 2 GB Pi variant. However, the problem persists, and memory usage seems unaffected, maintaining around 600MiB used, 600MiB free, and 700MiB cached. Interestingly, I'm not seeing a significant increase in CPU usage when detection time exceeds 1000ms, as one might expect if the CPU is compensating for the TPU.

Just adding my experience to the data pool here. Any additional insights would be much appreciated.

If I could provide any other information or check something else, please feel free to ask.

|

|

|

|

|

Any resolution to this? I am seeing the same and have to restart every day.

Server version: 2.1.8-Beta

Operating System: Linux (Linux 6.1.21-v8+ #1642 SMP PREEMPT Mon Apr 3 17:24:16 BST 2023)

CPUs: 1 CPU. (Arm64)

System RAM: 4 GiB

Target: Linux-Arm64

BuildConfig: Release

Execution Env: Docker

Runtime Env: Production

.NET framework: .NET 7.0.5

System GPU info:

GPU 3D Usage 0%

GPU RAM Usage 0

Video adapter info:

Global Environment variables:

CPAI_APPROOTPATH = /app

CPAI_PORT = 32168

Will add log next time incident repeats.

|

|

|

|

|

15:35:23:ObjectDetection (TF-Lite): Retrieved objectdetection_queue command

15:35:25:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...8e1d8e) took 1044ms

15:35:25:Response received (...8e1d8e): The interpreter is in use. Please try again later

15:35:25:Client request 'detect' in queue 'objectdetection_queue' (...6b2822)

15:35:25:Request 'detect' dequeued from 'objectdetection_queue' (...6b2822)

15:35:25:ObjectDetection (TF-Lite): Retrieved objectdetection_queue command

15:35:26:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...6b2822) took 1055ms

15:35:26:Response received (...6b2822): The interpreter is in use. Please try again later

15:35:27:Client request 'detect' in queue 'objectdetection_queue' (...17074b)

15:35:27:Request 'detect' dequeued from 'objectdetection_queue' (...17074b)

15:35:27:ObjectDetection (TF-Lite): Retrieved objectdetection_queue command

15:35:28:Client request 'Quit' in queue 'objectdetection_queue' (...f92f46)

15:35:28:Request 'Quit' dequeued from 'objectdetection_queue' (...f92f46)

15:35:28:Sending shutdown request to python3.9/ObjectDetectionTFLite

15:35:28:ObjectDetection (TF-Lite): Retrieved objectdetection_queue command

15:35:28:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...17074b) took 1070ms

15:35:28:Response received (...17074b): The interpreter is in use. Please try again later

15:35:30:Client request 'detect' in queue 'objectdetection_queue' (...42d81d)

15:35:30:Request 'detect' dequeued from 'objectdetection_queue' (...42d81d)

15:35:31:Client request 'detect' in queue 'objectdetection_queue' (...380d5d)

15:35:31:Client request 'detect' in queue 'objectdetection_queue' (...a50b13)

15:35:35:Client request 'detect' in queue 'objectdetection_queue' (...8350f0)

15:35:36:Client request 'detect' in queue 'objectdetection_queue' (...bb11d1)

15:35:36:Client request 'detect' in queue 'objectdetection_queue' (...eb4c78)

15:35:37:Client request 'detect' in queue 'objectdetection_queue' (...793b24)

15:35:41:Client request 'detect' in queue 'objectdetection_queue' (...34bc7f)

15:35:42:Client request 'detect' in queue 'objectdetection_queue' (...c33106)

15:35:46:Client request 'detect' in queue 'objectdetection_queue' (...4f1564)

15:35:47:Client request 'detect' in queue 'objectdetection_queue' (...8118f7)

15:35:51:Client request 'detect' in queue 'objectdetection_queue' (...8c5d82)

15:35:51:Client request 'detect' in queue 'objectdetection_queue' (...1f9738)

15:35:52:Client request 'detect' in queue 'objectdetection_queue' (...b836cd)

15:35:58:Client request 'detect' in queue 'objectdetection_queue' (...9cb8ab)

15:36:01:Forcing shutdown of python3.9/ObjectDetectionTFLite

15:36:01:Module ObjectDetectionTFLite has shutdown

15:36:01:GetCommandByRuntime: Runtime=python39, Location=Shared

15:36:01:Command: python3.9

15:36:01:Starting python3.9 "/app...nTFLite/objectdetection_tflite_adapter.py"

15:36:01:

15:36:01:Attempting to start ObjectDetectionTFLite with python3.9 "/app/preinstalled-modules/ObjectDetectionTFLite/objectdetection_tflite_adapter.py"

15:36:01:

15:36:01:Module 'ObjectDetection (TF-Lite)' (ID: ObjectDetectionTFLite)

15:36:01:Module Path: /app/preinstalled-modules/ObjectDetectionTFLite

15:36:01:AutoStart: True

15:36:01:Queue: objectdetection_queue

15:36:01:Platforms: windows,linux,linux-arm64,macos,macos-arm64

15:36:01:GPU: Support enabled

15:36:01:Parallelism: 0

15:36:01:Accelerator:

15:36:01:Half Precis.: enable

15:36:01:Runtime: python39

15:36:01:Runtime Loc: Shared

15:36:01:FilePath: objectdetection_tflite_adapter.py

15:36:01:Pre installed: True

15:36:01:Start pause: 1 sec

15:36:01:LogVerbosity:

15:36:01:Valid: True

15:36:01:Environment Variables

15:36:01:MODELS_DIR = %CURRENT_MODULE_PATH%/assets

15:36:01:MODEL_SIZE = Tiny

15:36:01:

15:36:01:Started ObjectDetection (TF-Lite) module

15:36:02:Running init for ObjectDetection (TF-Lite)

15:36:03:Client request 'detect' in queue 'objectdetection_queue' (...f0621d)

15:36:07:objectdetection_tflite_adapter.py: NUM_THREADS not found. Setting to default 1

15:36:07:objectdetection_tflite_adapter.py: MIN_CONFIDENCE not found. Setting to default 0.5

15:36:07:objectdetection_tflite_adapter.py: MODULE_PATH: /app/preinstalled-modules/ObjectDetectionTFLite

15:36:07:objectdetection_tflite_adapter.py: MODELS_DIR: /app/preinstalled-modules/ObjectDetectionTFLite/assets

15:36:07:objectdetection_tflite_adapter.py: MODEL_SIZE: small

15:36:07:objectdetection_tflite_adapter.py: CPU_MODEL_NAME: tf2_ssd_mobilenet_v2_coco17_ptq.tflite

15:36:07:objectdetection_tflite_adapter.py: TPU_MODEL_NAME: tf2_ssd_mobilenet_v2_coco17_ptq_edgetpu.tflite

15:36:07:objectdetection_tflite_adapter.py: Edge TPU detected

15:36:07:objectdetection_tflite_adapter.py: Timeout connecting to the server

15:36:07:objectdetection_tflite_adapter.py: ObjectDetection (TF-Lite) started.ObjectDetection (TF-Lite): ObjectDetection (TF-Lite) started.

15:36:07:objectdetection_tflite_adapter.py: Input details: {'name': 'serving_default_input:0', 'index': 0, 'shape': array([ 1, 300, 300, 3], dtype=int32), 'shape_signature': array([ 1, 300, 300, 3], dtype=int32), 'dtype': , 'quantization': (0.007843137718737125, 127), 'quantization_parameters': {'scales': array([0.00784314], dtype=float32), 'zero_points': array([127], dtype=int32), 'quantized_dimension': 0}, 'sparsity_parameters': {}}

15:36:07:objectdetection_tflite_adapter.py: Output details: {'name': 'StatefulPartitionedCall:3;StatefulPartitionedCall:2;StatefulPartitionedCall:1;StatefulPartitionedCall:02', 'index': 8, 'shape': array([ 1, 20], dtype=int32), 'shape_signature': array([ 1, 20], dtype=int32), 'dtype': , 'quantization': (0.0, 0), 'quantization_parameters': {'scales': array([], dtype=float32), 'zero_points': array([], dtype=int32), 'quantized_dimension': 0}, 'sparsity_parameters': {}}

15:36:07:ObjectDetection (TF-Lite): ObjectDetection (TF-Lite) started.

15:36:07:Request 'detect' dequeued from 'objectdetection_queue' (...380d5d)

15:36:07:Request 'detect' dequeued from 'objectdetection_queue' (...a50b13)

15:36:07:Request 'detect' dequeued from 'objectdetection_queue' (...8350f0)

15:36:07:Request 'detect' dequeued from 'objectdetection_queue' (...bb11d1)

15:36:07:Request 'detect' dequeued from 'objectdetection_queue' (...eb4c78)

15:36:07:Request 'detect' dequeued from 'objectdetection_queue' (...793b24)

15:36:07:Request 'detect' dequeued from 'objectdetection_queue' (...34bc7f)

15:36:07:Request 'detect' dequeued from 'objectdetection_queue' (...c33106)

15:36:07:Client request 'detect' in queue 'objectdetection_queue' (...f98921)

15:36:07:Request 'detect' dequeued from 'objectdetection_queue' (...4f1564)

15:36:07:ObjectDetection (TF-Lite): Retrieved objectdetection_queue command

15:36:07:Request 'detect' dequeued from 'objectdetection_queue' (...8118f7)

15:36:07:ObjectDetection (TF-Lite): Retrieved objectdetection_queue command

15:36:07:ObjectDetection (TF-Lite): Retrieved objectdetection_queue command

15:36:07:ObjectDetection (TF-Lite): Retrieved objectdetection_queue command

15:36:08:Request 'detect' dequeued from 'objectdetection_queue' (...8c5d82)

15:36:08:Response received (...c33106): No objects found

15:36:08:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...c33106) took 230ms

15:36:08:ObjectDetection (TF-Lite): Retrieved objectdetection_queue command

15:36:08:Request 'detect' dequeued from 'objectdetection_queue' (...1f9738)

15:36:08:Response received (...34bc7f): Found person

15:36:08:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...34bc7f) took 288ms

15:36:08:ObjectDetection (TF-Lite): Retrieved objectdetection_queue command

15:36:08:Request 'detect' dequeued from 'objectdetection_queue' (...b836cd)

15:36:08:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...4f1564) took 138ms

15:36:08:Response received (...4f1564): Found person

15:36:08:ObjectDetection (TF-Lite): Retrieved objectdetection_queue command

15:36:08:Request 'detect' dequeued from 'objectdetection_queue' (...9cb8ab)

15:36:08:Response received (...8118f7): Found person

15:36:08:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...8118f7) took 142ms

15:36:08:ObjectDetection (TF-Lite): Retrieved objectdetection_queue command

15:36:08:Request 'detect' dequeued from 'objectdetection_queue' (...f0621d)

15:36:08:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...8c5d82) took 128ms

15:36:08:Response received (...8c5d82): Found person

|

|

|

|

|

Updated to 2.1.9-Beta and the issue still persists: about every 12 hours I have to restart the TF-Lite module. Reverting to DeepStack; will try again in a few versions.

|

|

|

|

|

Having the same issue. This has existed for me since the initial release on the Pi 4 (2.1.3?). Interestingly, before using the Coral with CodeProject on the Pi 4, I tried it with Scrypted on Windows and had the same issue: it quit working after a number of hours and required reloading the TPU. May be a bigger issue somehow.

00:50:44:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...c9a1f7) took 68ms

00:50:44:Response received (...c9a1f7): No objects found

00:50:46:Client request 'detect' in queue 'objectdetection_queue' (...4bdf35)

00:50:46:Request 'detect' dequeued from 'objectdetection_queue' (...4bdf35)

00:50:46:ObjectDetection (TF-Lite): Retrieved objectdetection_queue command

00:50:46:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...4bdf35) took 85ms

00:50:46:Response received (...4bdf35): No objects found

00:52:04:Request 'detect' dequeued from 'objectdetection_queue' (...335f69)

00:52:04:Client request 'detect' in queue 'objectdetection_queue' (...335f69)

00:52:04:ObjectDetection (TF-Lite): Retrieved objectdetection_queue command

00:52:41:Request 'detect' dequeued from 'objectdetection_queue' (...7c7201)

00:52:41:Client request 'detect' in queue 'objectdetection_queue' (...7c7201)

00:52:41:ObjectDetection (TF-Lite): Retrieved objectdetection_queue command

00:52:42:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...7c7201) took 1073ms

00:52:42:Response received (...7c7201): The interpreter is in use. Please try again later

00:52:44:Request 'detect' dequeued from 'objectdetection_queue' (...74bf42)

00:52:44:Client request 'detect' in queue 'objectdetection_queue' (...74bf42)

00:52:44:ObjectDetection (TF-Lite): Retrieved objectdetection_queue command

00:52:45:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...74bf42) took 1031ms

00:52:45:Response received (...74bf42): The interpreter is in use. Please try again later

00:52:46:Client request 'detect' in queue 'objectdetection_queue' (...eccb06)

00:52:46:Request 'detect' dequeued from 'objectdetection_queue' (...eccb06)

00:52:46:ObjectDetection (TF-Lite): Retrieved objectdetection_queue command

00:52:47:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...eccb06) took 1086ms

00:52:47:Response received (...eccb06): The interpreter is in use. Please try again later

00:54:04:Client request 'detect' in queue 'objectdetection_queue' (...97f7aa)

00:54:04:Request 'detect' dequeued from 'objectdetection_queue' (...97f7aa)

00:54:04:ObjectDetection (TF-Lite): Retrieved objectdetection_queue command

00:54:05:ObjectDetection (TF-Lite): Queue request for ObjectDetection (TF-Lite) command 'detect' (...97f7aa) took 1051ms

00:54:05:Response received (...97f7aa): The interpreter is in use. Please try again later

00:54:41:Client request 'detect' in queue 'objectdetection_queue' (...c9eda9)

00:54:41:Request 'detect' dequeued from 'objectdetection_queue' (...c9eda9)

|

|

|

|

|

Lots of reports of this issue. Chris tried some fixes in later versions, but they didn't work. Here's a dupe issue: "CDAI 2.1.9 Coral Dies after a while".

Note that when the detection time goes up to that classic 1000ms, that means the Coral interpreter (whatever that is) is locked up and is not actually doing analysis anymore.
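The near-constant ~1000ms is consistent with a timeout being hit rather than with slow inference. One way that could happen, and this is purely an assumption and not taken from the CodeProject.AI source, is a lock around the interpreter that requests try to acquire for about a second before giving up. If one inference hangs while holding the lock, every later request waits out the timeout and gets the "in use" message. A Python sketch of that hypothetical mechanism, with made-up names:

```python
import threading
import time

# Hypothetical names throughout; this is not CodeProject.AI source code.
interpreter_lock = threading.Lock()
LOCK_TIMEOUT_S = 0.2  # shortened for the demo; the logs above show ~1000ms


def detect(run_inference):
    """Run one inference, or give up if the interpreter lock stays busy."""
    start = time.perf_counter()
    if not interpreter_lock.acquire(timeout=LOCK_TIMEOUT_S):
        # Lock never became free: report "busy" after waiting out the timeout.
        return {
            "success": False,
            "error": "The interpreter is in use. Please try again later",
            "ms": (time.perf_counter() - start) * 1000,
        }
    try:
        result = run_inference()
    finally:
        interpreter_lock.release()
    return {
        "success": True,
        "result": result,
        "ms": (time.perf_counter() - start) * 1000,
    }


# Healthy case: the lock is free, so the call returns quickly.
print(detect(lambda: "person"))

# Simulate an inference that hung while holding the lock and never released it.
interpreter_lock.acquire()
print(detect(lambda: "person"))  # fails after roughly LOCK_TIMEOUT_S

# Releasing the lock stands in for restarting the module.
interpreter_lock.release()
```

If something like this is what is going on, it would also fit the reports that memory and CPU stay flat: the requests are waiting, not working.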

|

|

|

|

|
