While reviewing and refactoring a real-world codebase, I've noticed how often byte[] APIs are misused. That's why in this article I'm sharing some thoughts on why you shouldn't avoid Stream APIs in your code.

Introduction

When working with files, there are often APIs operating on both byte[] and Stream. People quite often choose the byte[] counterpart because it requires less ceremony or just feels intuitively clearer.

You may think of this conclusion as far-fetched, but I decided to write about it after reviewing and refactoring some real-world production code. Like some of the other simple things I've mentioned in my previous articles, this simple trick may well be neglected in your codebase.

Example

Let's look at an example as simple as calculating a file hash. In spite of its simplicity, some people believe that the only way to do it is to read the entire file into memory.

Experienced readers may have already foreseen a problem with such an approach. Let's benchmark hashing a 900 MB file to see how the problem manifests and how we can circumvent it.

The baseline will be the naive solution of calculating the hash from a byte[] source:

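```csharp
using System;
using System.Security.Cryptography;

public static Guid ComputeHash(byte[] data)
{
    using HashAlgorithm algorithm = MD5.Create();
    byte[] bytes = algorithm.ComputeHash(data);

    // An MD5 hash is 16 bytes long, exactly the size of a Guid.
    return new Guid(bytes);
}
```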

So, following the advice from the title of the article, we'll add another method that accepts a Stream, converts it to a byte array, and calculates the hash:

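```csharp
using System;
using System.IO;
using System.Threading;
using System.Threading.Tasks;

public static async Task<Guid> ComputeHash(Stream stream, CancellationToken token)
{
    // Copies the entire stream into memory before hashing it.
    var contents = await ConvertToBytes(stream, token);
    return ComputeHash(contents);
}

private static async Task<byte[]> ConvertToBytes(Stream stream, CancellationToken token)
{
    using var ms = new MemoryStream();
    await stream.CopyToAsync(ms, token);

    // ToArray allocates yet another copy of the buffered data.
    return ms.ToArray();
}
```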

However, calculating a hash from a byte[] is not the only option. HashAlgorithm also has a ComputeHash overload that accepts a Stream. Let's use it:

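```csharp
using System;
using System.IO;
using System.Security.Cryptography;

public static Guid ComputeStream(Stream stream)
{
    using HashAlgorithm algorithm = MD5.Create();
    byte[] bytes = algorithm.ComputeHash(stream);

    // Rewind so the caller can reuse the stream; non-seekable streams cannot be rewound.
    if (stream.CanSeek)
    {
        stream.Seek(0, SeekOrigin.Begin);
    }

    return new Guid(bytes);
}
```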
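The streaming approach also works asynchronously. On .NET 5 and later, HashAlgorithm has a `ComputeHashAsync(Stream, CancellationToken)` overload, so an async counterpart to ComputeStream needs no intermediate byte[] either. What follows is a minimal sketch that was not part of the benchmark. The `StreamHashing` class and the `ComputeStreamAsync` name are hypothetical.

```csharp
using System;
using System.IO;
using System.Security.Cryptography;
using System.Threading;
using System.Threading.Tasks;

public static class StreamHashing
{
    // Hypothetical async counterpart of ComputeStream (requires .NET 5+ for ComputeHashAsync).
    // The stream is hashed in chunks; it is never materialized as a single byte[].
    public static async Task<Guid> ComputeStreamAsync(Stream stream, CancellationToken token)
    {
        using HashAlgorithm algorithm = MD5.Create();
        byte[] bytes = await algorithm.ComputeHashAsync(stream, token);

        // Rewind only when the stream supports it (e.g. FileStream, MemoryStream).
        if (stream.CanSeek)
        {
            stream.Seek(0, SeekOrigin.Begin);
        }

        return new Guid(bytes);
    }
}
```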

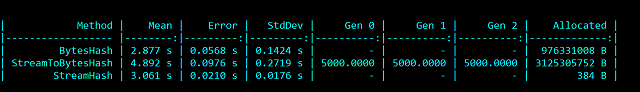

The results are quite telling. Although execution time is pretty similar, memory allocation varies dramatically.
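You can check the allocation difference roughly without a full benchmark. One way is to compare `GC.GetAllocatedBytesForCurrentThread()` (available in modern .NET, e.g. .NET 5 and later) before and after each approach. This is only a sketch: `AllocationProbe` and its methods are hypothetical helpers, and the reported numbers are approximate. A dedicated tool such as BenchmarkDotNet's memory diagnoser gives more reliable figures.

```csharp
using System;
using System.IO;
using System.Security.Cryptography;

public static class AllocationProbe
{
    // Returns the (approximate) number of bytes allocated on the current thread by the action.
    public static long MeasureAllocations(Action action)
    {
        long before = GC.GetAllocatedBytesForCurrentThread();
        action();
        return GC.GetAllocatedBytesForCurrentThread() - before;
    }

    // Hypothetical helper comparing the naive byte[] approach with the Stream overload.
    public static (long Naive, long Streaming) Compare(string path)
    {
        long naive = MeasureAllocations(() =>
        {
            // Loads the whole file into a single byte[] before hashing.
            using var md5 = MD5.Create();
            md5.ComputeHash(File.ReadAllBytes(path));
        });

        long streaming = MeasureAllocations(() =>
        {
            // Hashes the file chunk by chunk while reading from the FileStream.
            using var md5 = MD5.Create();
            using var file = File.OpenRead(path);
            md5.ComputeHash(file);
        });

        return (naive, streaming);
    }
}
```

For a file of N bytes, the naive measurement is at least N, because File.ReadAllBytes allocates an array of the file's size. The streaming measurement stays roughly constant regardless of file size.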

So what happened here? Let's have a look at the ComputeHash(Stream) implementation in the .NET reference source:

```csharp
public byte[] ComputeHash(Stream inputStream)
{
    if (_disposed)
        throw new ObjectDisposedException(null);

    byte[] buffer = ArrayPool<byte>.Shared.Rent(4096);

    int bytesRead;
    int clearLimit = 0;

    while ((bytesRead = inputStream.Read(buffer, 0, buffer.Length)) > 0)
    {
        if (bytesRead > clearLimit)
        {
            clearLimit = bytesRead;
        }

        HashCore(buffer, 0, bytesRead);
    }

    CryptographicOperations.ZeroMemory(buffer.AsSpan(0, clearLimit));
    ArrayPool<byte>.Shared.Return(buffer, clearArray: false);

    return CaptureHashCodeAndReinitialize();
}
```

Blindly following the advice from the title didn't help, because our async ComputeHash overload still copies the whole stream into a byte[] before hashing it. The key takeaway from these figures is that a Stream lets us process a file in chunks instead of naively loading it into memory. The reference implementation above reuses a single pooled buffer of at least 4096 bytes. You may not notice the difference on small files, but as soon as you have to deal with large files, loading them into memory at once becomes quite costly.
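The same chunk-by-chunk idea can be written out explicitly with `IncrementalHash`. The code reads a fixed-size buffer, feeds only the bytes actually read to the hash, and repeats until the stream ends. This is a sketch for illustration, not what HashAlgorithm does internally. The `ChunkedHashing` class, the `ComputeChunked` method and the default buffer size are hypothetical choices.

```csharp
using System;
using System.IO;
using System.Security.Cryptography;

public static class ChunkedHashing
{
    // Memory use is bounded by bufferSize, not by the length of the stream.
    public static Guid ComputeChunked(Stream stream, int bufferSize = 81920)
    {
        using var hash = IncrementalHash.CreateHash(HashAlgorithmName.MD5);
        byte[] buffer = new byte[bufferSize];
        int bytesRead;

        // Read may return fewer bytes than requested; only the bytes actually read are hashed.
        while ((bytesRead = stream.Read(buffer, 0, buffer.Length)) > 0)
        {
            hash.AppendData(buffer, 0, bytesRead);
        }

        return new Guid(hash.GetHashAndReset());
    }
}
```

Because MD5 is computed incrementally, the result equals hashing the whole byte[] at once, whatever the buffer size.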

Most .NET methods that work with byte[] already have a Stream counterpart, so using it shouldn't be a problem. When you provide your own API, consider supplying a method that operates on a Stream in a robust, batch-by-batch fashion.
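A common way to follow this advice is to make the Stream method the primary implementation and offer a byte[] overload that simply wraps the array in a MemoryStream. The example task (counting newline bytes) and the `LineCounter`/`CountNewLines` names are hypothetical and serve only to illustrate the shape of such an API.

```csharp
using System;
using System.IO;

public static class LineCounter
{
    // Primary API: processes the stream batch by batch, so input size does not dictate memory use.
    public static long CountNewLines(Stream stream)
    {
        byte[] buffer = new byte[81920];
        long count = 0;
        int bytesRead;

        while ((bytesRead = stream.Read(buffer, 0, buffer.Length)) > 0)
        {
            for (int i = 0; i < bytesRead; i++)
            {
                if (buffer[i] == (byte)'\n') count++;
            }
        }

        return count;
    }

    // Convenience overload: MemoryStream wraps the existing array without copying it.
    public static long CountNewLines(byte[] data)
    {
        using var stream = new MemoryStream(data, writable: false);
        return CountNewLines(stream);
    }
}
```

Delegating the byte[] overload to the Stream one keeps a single implementation. Callers that already hold the data in memory pay nothing extra.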

Comparing streams

Imagine we want to write a utility method that compares two streams. We could use the approach mentioned above: read chunks from each stream and compare these chunks. However, this approach hides a danger. Stream.Read is allowed to return fewer bytes than requested even before the end of the stream, and some implementations such as NetworkStream often do. As a result, chunks read from the two streams may not line up.
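One defense against short reads is a helper that keeps calling Read until the requested number of bytes has arrived or the stream has ended. The sketch below uses the hypothetical names `StreamReading` and `ReadFull`. On .NET 7 and later, `Stream.ReadAtLeast` and `Stream.ReadExactly` cover the same need out of the box.

```csharp
using System.IO;

public static class StreamReading
{
    // Keeps calling Read until `count` bytes have been read or the stream ends.
    // Returns the number of bytes actually read; it is less than `count` only at end of stream.
    public static int ReadFull(Stream stream, byte[] buffer, int offset, int count)
    {
        int total = 0;
        while (total < count)
        {
            int read = stream.Read(buffer, offset + total, count - total);
            if (read == 0) break; // end of stream
            total += read;
        }
        return total;
    }
}
```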

To avoid this problem, we'll compare the streams byte by byte:

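```csharp
using System.IO;

public static bool IsEqual(this Stream stream, Stream otherStream)
{
    if (stream is null || otherStream is null) return false;

    // Length is only supported by seekable streams; NetworkStream, for example, throws on it.
    if (stream.CanSeek && otherStream.CanSeek)
    {
        if (stream.Length == 0 || otherStream.Length == 0) return false;
        if (stream.Length != otherStream.Length) return false;
    }

    bool equal = true;
    while (true)
    {
        // Read one byte from each stream so that both advance in lockstep.
        int buffer = stream.ReadByte();
        int otherBuffer = otherStream.ReadByte();

        if (buffer != otherBuffer)
        {
            // Either the bytes differ or one stream ended before the other.
            equal = false;
            break;
        }

        if (buffer == -1) break; // both streams ended at the same position
    }

    // Rewind only when the stream supports seeking.
    if (stream.CanSeek) stream.Seek(0, SeekOrigin.Begin);
    if (otherStream.CanSeek) otherStream.Seek(0, SeekOrigin.Begin);

    return equal;
}
```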
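Reading one byte at a time can be slow on unbuffered streams. An alternative keeps the chunked comparison but makes it safe against short reads. It relies on `Stream.ReadAtLeast` (.NET 7 and later), which keeps reading until the buffer is full or the stream ends. This is a sketch with hypothetical names (`StreamComparison`, `ContentEquals`). Unlike IsEqual above, it treats two empty streams as equal and does not rewind the streams.

```csharp
using System;
using System.IO;

public static class StreamComparison
{
    // Compares two streams chunk by chunk; requires .NET 7+ for Stream.ReadAtLeast.
    public static bool ContentEquals(Stream stream, Stream otherStream, int bufferSize = 81920)
    {
        byte[] buffer = new byte[bufferSize];
        byte[] otherBuffer = new byte[bufferSize];

        while (true)
        {
            // Each call fills the whole buffer unless the stream ends first.
            int read = stream.ReadAtLeast(buffer, bufferSize, throwOnEndOfStream: false);
            int otherRead = otherStream.ReadAtLeast(otherBuffer, bufferSize, throwOnEndOfStream: false);

            // A different count means one stream ended earlier than the other.
            if (read != otherRead) return false;
            if (read == 0) return true; // both streams ended together

            if (!buffer.AsSpan(0, read).SequenceEqual(otherBuffer.AsSpan(0, read))) return false;
        }
    }
}
```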

Conclusion

Stream APIs allow batch-by-batch processing, which reduces memory consumption on big files. Although at first glance Stream APIs may seem to require more ceremony, they are definitely a useful tool in one's toolbox.

History

- 25th July, 2021 - Published initial version

- 9th August, 2021 - Updated stream comparison code according to comments

- 13th July, 2022 - Added reference source for more clarity

- 12th April, 2023 - Fixed stream comparison for network streams