So far, we have learned to use AI in the web browser to track faces in real time and to apply deep learning to detect and classify facial emotions. Here we put the two together and see whether we can run emotion detection on webcam video in real time.

Introduction

Apps like Snapchat offer an amazing variety of face filters and lenses that let you overlay interesting things on your photos and videos. If you’ve ever given yourself virtual dog ears or a party hat, you know how much fun it can be!

Have you wondered how you’d create these kinds of filters from scratch? Well, now’s your chance to learn, all within your web browser! In this series, we’re going to see how to create Snapchat-style filters in the browser, train an AI model to understand facial expressions, and do even more using TensorFlow.js and face tracking.

You are welcome to download the demo of this project. You may need to enable WebGL in your web browser for performance. You can also download the code and files for this series.

We are assuming that you are familiar with JavaScript and HTML and have at least a basic understanding of neural networks. If you are new to TensorFlow.js, we recommend that you first check out this guide: Getting Started with Deep Learning in Your Browser Using TensorFlow.js.

If you would like to see more of what is possible in the web browser with TensorFlow.js, check out these AI series: Computer Vision with TensorFlow.js and Chatbots using TensorFlow.js.

So far, we have learned to use AI in the web browser to track faces in real time and to apply deep learning to detect and classify facial emotions. The next logical step is to put the two together and see whether we can run emotion detection on webcam video in real time. Let’s do this!

Adding Facial Emotion Detection

For this project, we will put our trained facial emotion detection model to the test with real-time video from the webcam. We’ll begin with a starter template based on the final code from the face tracking project and modify it with parts of the facial emotion detection code.

Let’s load and use our pre-trained facial expression model. First, we will define some global variables for the emotion detection, just like we did before:

const emotions = [ "angry", "disgust", "fear", "happy", "neutral", "sad", "surprise" ];

let emotionModel = null;

Next, we can load the emotion detection model inside the async block:

(async () => {
    ...
    model = await faceLandmarksDetection.load(
        faceLandmarksDetection.SupportedPackages.mediapipeFacemesh
    );
    emotionModel = await tf.loadLayersModel( 'web/model/facemo.json' );
    ...
})();

And we can add a utility function to run the model prediction from key facial points like this:

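async function predictEmotion( points ) {
    // Wrap the flat array of key point coordinates in a batch of one
    let result = tf.tidy( () => {
        const xs = tf.stack( [ tf.tensor1d( points ) ] );
        return emotionModel.predict( xs );
    });
    let prediction = await result.data();
    // tf.tidy keeps the returned tensor alive, so release it manually
    result.dispose();
    // Pick the label with the highest score
    let id = prediction.indexOf( Math.max( ...prediction ) );
    return emotions[ id ];
}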
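
The last two lines of predictEmotion pick the index of the largest value in the model output. The following is a minimal sketch of that step on its own, assuming the model returns one score per entry of the emotions array. The helper name topEmotion and the example scores are hypothetical and not part of the project code. Unlike predictEmotion, it also returns the winning score.

```javascript
// Labels in the same order as the article's `emotions` array.
const emotions = [ "angry", "disgust", "fear", "happy", "neutral", "sad", "surprise" ];

// Hypothetical helper (not part of the article's code): returns the label
// with the highest score together with that score.
function topEmotion( scores, labels = emotions ) {
    let best = 0;
    for( let i = 1; i < scores.length; i++ ) {
        if( scores[ i ] > scores[ best ] ) {
            best = i;
        }
    }
    return { label: labels[ best ], score: scores[ best ] };
}

// Made-up scores, one per label, in the same form that result.data() returns.
const example = topEmotion( new Float32Array( [ 0.05, 0.05, 0.1, 0.6, 0.1, 0.05, 0.05 ] ) );
console.log( example.label ); // "happy"
```

Returning the score as well would make it possible to show the detected emotion only when the model is reasonably sure. The right threshold is something you would need to find by experiment.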

Lastly, we need to grab the key facial points from the detection inside trackFace and pass them to the emotion predictor.

async function trackFace() {
    ...
    let points = null;
    faces.forEach( face => {
        ...
        const features = [
            "noseTip",
            "leftCheek",
            "rightCheek",
            "leftEyeLower1", "leftEyeUpper1",
            "rightEyeLower1", "rightEyeUpper1",
            "leftEyebrowLower",
            "rightEyebrowLower",
            "lipsLowerInner",
            "lipsUpperInner",
        ];
        points = [];
        features.forEach( feature => {
            face.annotations[ feature ].forEach( x => {
                points.push( ( x[ 0 ] - x1 ) / bWidth );
                points.push( ( x[ 1 ] - y1 ) / bHeight );
            });
        });
    });

    if( points ) {
        let emotion = await predictEmotion( points );
        setText( `Detected: ${emotion}` );
    }
    else {
        setText( "No Face" );
    }

    requestAnimationFrame( trackFace );
}
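
The inner loops scale each key point relative to the face bounding box, so the values passed to the model do not depend on where the face sits in the frame or how large it appears. In the full listing, x1, y1, bWidth, and bHeight are derived from face.boundingBox. As a rough sketch, the same step can be written as a standalone function and tried without a webcam. The name normalizeKeyPoints and the fakeFace object are hypothetical. The fake object mimics only the fields the code reads.

```javascript
// Hypothetical helper: flattens the selected annotations of one face into
// [ x0, y0, x1, y1, ... ], each coordinate scaled relative to the bounding box.
function normalizeKeyPoints( face, features ) {
    const [ x1, y1 ] = face.boundingBox.topLeft;
    const [ x2, y2 ] = face.boundingBox.bottomRight;
    const bWidth = x2 - x1;
    const bHeight = y2 - y1;
    const points = [];
    features.forEach( feature => {
        face.annotations[ feature ].forEach( p => {
            points.push( ( p[ 0 ] - x1 ) / bWidth );
            points.push( ( p[ 1 ] - y1 ) / bHeight );
        });
    });
    return points;
}

// Made-up face with a 200 x 200 bounding box and two annotated points.
const fakeFace = {
    boundingBox: { topLeft: [ 100, 50 ], bottomRight: [ 300, 250 ] },
    annotations: {
        noseTip: [ [ 200, 150, 0 ] ],
        leftCheek: [ [ 150, 200, 0 ] ],
    },
};
console.log( normalizeKeyPoints( fakeFace, [ "noseTip", "leftCheek" ] ) );
// [ 0.5, 0.5, 0.25, 0.75 ]
```

The feature list and its order have to match what the emotion model was trained on, because the model only sees a flat array of numbers.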

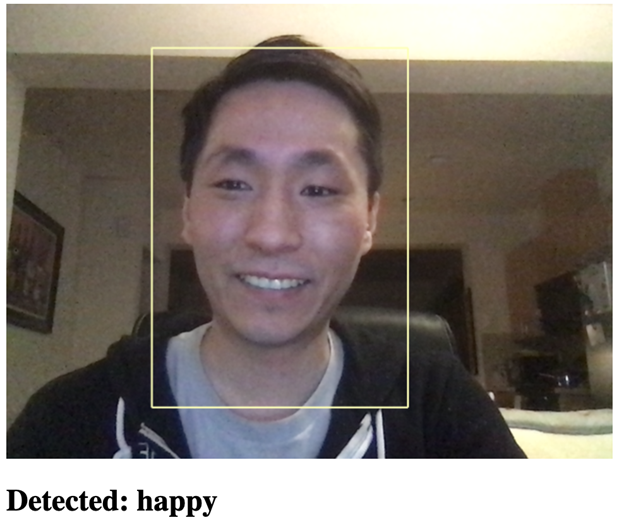

That’s all it takes to get this running. Now, when you open the web page, it should detect your face and recognize the different emotions. Experiment with it and have fun!

Finish Line

To wrap up this project, here is the full code:

<html>
    <head>
        <title>Real-Time Facial Emotion Detection</title>
        <script src="https://cdn.jsdelivr.net/npm/@tensorflow/tfjs@2.4.0/dist/tf.min.js"></script>
        <script src="https://cdn.jsdelivr.net/npm/@tensorflow-models/face-landmarks-detection@0.0.1/dist/face-landmarks-detection.js"></script>
    </head>
    <body>
        <canvas id="output"></canvas>
        <video id="webcam" playsinline style="
            visibility: hidden;
            width: auto;
            height: auto;
            ">
        </video>
        <h1 id="status">Loading...</h1>
        <script>
        function setText( text ) {
            document.getElementById( "status" ).innerText = text;
        }

        function drawLine( ctx, x1, y1, x2, y2 ) {
            ctx.beginPath();
            ctx.moveTo( x1, y1 );
            ctx.lineTo( x2, y2 );
            ctx.stroke();
        }

        async function setupWebcam() {
            return new Promise( ( resolve, reject ) => {
                const webcamElement = document.getElementById( "webcam" );
                const navigatorAny = navigator;
                navigator.getUserMedia = navigator.getUserMedia ||
                navigatorAny.webkitGetUserMedia || navigatorAny.mozGetUserMedia ||
                navigatorAny.msGetUserMedia;
                if( navigator.getUserMedia ) {
                    navigator.getUserMedia( { video: true },
                        stream => {
                            webcamElement.srcObject = stream;
                            webcamElement.addEventListener( "loadeddata", resolve, false );
                        },
                    error => reject());
                }
                else {
                    reject();
                }
            });
        }

        const emotions = [ "angry", "disgust", "fear", "happy", "neutral", "sad", "surprise" ];
        let emotionModel = null;

        let output = null;
        let model = null;

        async function predictEmotion( points ) {
            // Wrap the flat array of key point coordinates in a batch of one
            let result = tf.tidy( () => {
                const xs = tf.stack( [ tf.tensor1d( points ) ] );
                return emotionModel.predict( xs );
            });
            let prediction = await result.data();
            result.dispose();
            // Pick the label with the highest score
            let id = prediction.indexOf( Math.max( ...prediction ) );
            return emotions[ id ];
        }

        async function trackFace() {
            const video = document.querySelector( "video" );
            const faces = await model.estimateFaces( {
                input: video,
                returnTensors: false,
                flipHorizontal: false,
            });
            // Draw the current video frame onto the canvas
            output.drawImage(
                video,
                0, 0, video.width, video.height,
                0, 0, video.width, video.height
            );

            let points = null;
            faces.forEach( face => {
                // Draw the bounding box
                const x1 = face.boundingBox.topLeft[ 0 ];
                const y1 = face.boundingBox.topLeft[ 1 ];
                const x2 = face.boundingBox.bottomRight[ 0 ];
                const y2 = face.boundingBox.bottomRight[ 1 ];
                const bWidth = x2 - x1;
                const bHeight = y2 - y1;
                drawLine( output, x1, y1, x2, y1 );
                drawLine( output, x2, y1, x2, y2 );
                drawLine( output, x1, y2, x2, y2 );
                drawLine( output, x1, y1, x1, y2 );

                // Collect the key facial points, scaled relative to the bounding box
                const features = [
                    "noseTip",
                    "leftCheek",
                    "rightCheek",
                    "leftEyeLower1", "leftEyeUpper1",
                    "rightEyeLower1", "rightEyeUpper1",
                    "leftEyebrowLower",
                    "rightEyebrowLower",
                    "lipsLowerInner",
                    "lipsUpperInner",
                ];
                points = [];
                features.forEach( feature => {
                    face.annotations[ feature ].forEach( x => {
                        points.push( ( x[ 0 ] - x1 ) / bWidth );
                        points.push( ( x[ 1 ] - y1 ) / bHeight );
                    });
                });
            });

            if( points ) {
                let emotion = await predictEmotion( points );
                setText( `Detected: ${emotion}` );
            }
            else {
                setText( "No Face" );
            }

            requestAnimationFrame( trackFace );
        }

        (async () => {
            await setupWebcam();
            const video = document.getElementById( "webcam" );
            video.play();
            let videoWidth = video.videoWidth;
            let videoHeight = video.videoHeight;
            video.width = videoWidth;
            video.height = videoHeight;

            let canvas = document.getElementById( "output" );
            canvas.width = video.width;
            canvas.height = video.height;

            output = canvas.getContext( "2d" );
            // Mirror the canvas horizontally
            output.translate( canvas.width, 0 );
            output.scale( -1, 1 );
            output.fillStyle = "#fdffb6";
            output.strokeStyle = "#fdffb6";
            output.lineWidth = 2;

            // Load the face landmarks model and the emotion detection model
            model = await faceLandmarksDetection.load(
                faceLandmarksDetection.SupportedPackages.mediapipeFacemesh
            );
            emotionModel = await tf.loadLayersModel( 'web/model/facemo.json' );

            setText( "Loaded!" );

            trackFace();
        })();
        </script>
    </body>
</html>
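
The setupWebcam function in the listing relies on the callback-style navigator.getUserMedia and its vendor-prefixed variants, which are deprecated in favor of the promise-based navigator.mediaDevices.getUserMedia. If the listing fails to open the camera in your browser, something along these lines may be worth trying. This is a sketch rather than a drop-in replacement: the name setupWebcamModern and its two parameters are hypothetical, and they are there only so the function can be exercised with stand-in objects outside a browser.

```javascript
// Sketch of a promise-based webcam setup. Both parameters are hypothetical
// additions; in a browser the second one defaults to navigator.mediaDevices.
async function setupWebcamModern( videoElement, mediaDevices = navigator.mediaDevices ) {
    if( !mediaDevices || !mediaDevices.getUserMedia ) {
        throw new Error( "getUserMedia is not available" );
    }
    const stream = await mediaDevices.getUserMedia( { video: true } );
    videoElement.srcObject = stream;
    // Wait for the first frame, as the original setupWebcam does.
    await new Promise( resolve => {
        videoElement.addEventListener( "loadeddata", resolve, { once: true } );
    });
    return videoElement;
}

// In the page, the call would look like this:
// await setupWebcamModern( document.getElementById( "webcam" ) );
```

Because the function is async, a denied permission or a missing camera surfaces as a rejected promise, which the calling code can catch and report with setText.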

What’s Next? When Can We Wear Virtual Glasses?

Pulling code from the first two articles of this series allowed us to build a real-time facial emotion detector with just a bit of JavaScript. Imagine what else you could do with TensorFlow.js!

In the next article, we’ll get back to our goal of building a Snapchat-style face filter using what we have learned so far with face tracking and adding 3D rendering via ThreeJS. Stay tuned!